A day or two after publishing my TL;DR on Wordle algorithms, I stumbled on a remarkable paper that neatly summarizes all the main ideas. The remarkable part is that the paper was written 50 years before Wordle was invented!

The paper is “Information Theory and the Game of Jotto,” issued in August of 1971 as Artificial Intelligence Memo No. 28 from the AI Lab at MIT. The author was Michael D. Beeler, known to me mainly as one of the three principal authors of HAKMEM (the others were Bill Gosper and Rich Schroeppel). Beeler later worked at Bolt, Baranek, and Newman, an MIT spinoff.

Wikipedia tells me that Jotto was invented in 1955 by Morton M. Rosenfeld as a game for two players. As in Wordle, you try to discover a secret word by submitting guess words and getting feedback about how close you have come to the target. The big difference is that JOTTO’s feedback offers only a crude measure of closeness. You learn the number of letters in your guess word that match one of the letters in the target word. You get no indication of which letters match, or whether they are in the correct positions.

The unit of measure for closeness is the jot. Beeler gives the example of playing GLASS against SMILE, which earns a closeness score of two jots, since there are matches for the letter L and for one S. Unlike the Wordle feedback rule, this scoring scheme is symmetric: The score remains the same if you switch the roles of guess and target word.

A defect of the game, in my view, is that you can max out the score at five jots and still not know the target word. For example, when a five-jot score tells you that the letters of the target are {A, E, G, L, R}, the word could be GLARE, LAGER, LARGE, or REGAL. Your only way to pin down the answer is to guess them in sequence.

Beeler’s main topic is not how the game proceeds between human players but how a computer can be programmed to take the role of a player. He reports that “A JOTTO program has existed for a couple of years at MIT’s A.I. Lab,” meaning it was created sometime in the late 1960s. He says nothing about who wrote this program. I’m going to make the wild surmise that Beeler himself might have been the author, particularly given his intimate knowledge of the program’s innards.

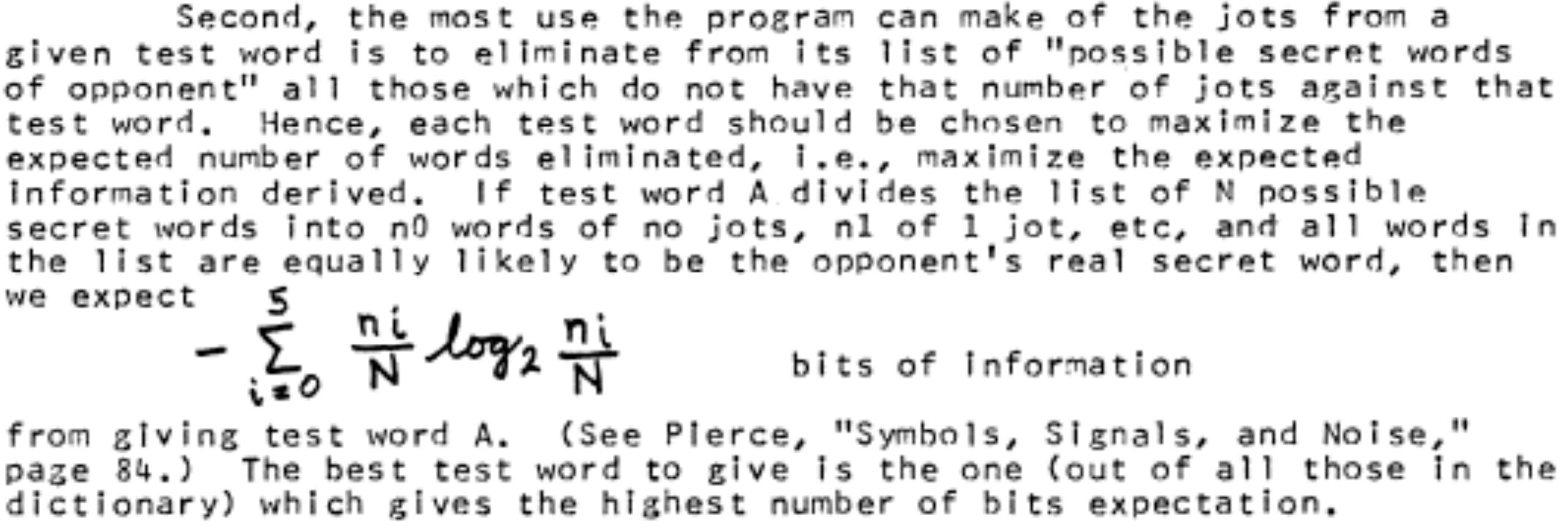

Here’s the crucial passage, lifted directly from the memo:

The strategy described here—maximizing the information gain from each guess—is exactly what’s recommended for Wordle. But where Wordle divvies up the potential target words into \(3^5 = 243\) subsets, the JOTTO scoring rule defines only six categories (0 through 5 jots). As a result, the maximum possible information gain is only about 2.6 bits in JOTTO, compared with almost 8 bits in Wordle.

Beeler also recognized a limitation of this “greedy” strategy. “It is conceivable that the test word with the highest expectation at the current point in the game has a good chance of getting us to a point where we will NOT have any particularly good test words available . . . I am indebted to Bill Gosper for pointing out this possibility; the computation required, however, is impractical, and besides, the program seems to do acceptably as is.”

The JOTTO program was written in the assembly language of the PDP-6 and PDP-10 family of machines from the Digital Equipment Corporation, which were much loved in that era at MIT. (Beeler praises the instruction set as “very symmetrical, complete, powerful and easy to think in.”) But however elegant the architecture, physical resources were cramped, with a maximum memory capacity of about one megabyte. Nevertheless, Beeler found room for the program itself, for a dictionary of about 7,000 words, and for tables of precomputed responses to the first two or three guesses.

Humbling.