The Keys to the Keydom

by Brian Hayes

Published 27 October 2013

Your security and privacy on the Internet (to the extent that such quaint notions still have meaning) depend on the difficulty of factoring certain large numbers. For example, take this 1,024-bit integer:

X = 123784517654557044204572030015647643260197571566202790882488143432336664289530131607571273603815008562006802500078945576463726847087638846268210782306492856139635102768022084368721012277184508607370805021154629821395702654988748083875440199841915223400907734192655713097895866822706517949507993107455319103401

The number printed above is the product of two 512-bit prime factors. If you set out to find those factors, the project might well keep you busy for many megayears. But I can make the job much easier by giving you a second number of the same size:

Y = 139752806258570179719657334941265463008205415047073349942370461270597321020717639292879992151626413610247750429267916230424010955054750502833517070395986289724237112410816000558148623785411568845517146303421384063525091824898318226175234193815950597041627518140906384889218054867887058429444934835873139133193

Factoring both X and Y would appear to be twice as much work, but in fact you can do it lickety-split. On my laptop it took roughly 200 microseconds. From millions of years to millionths of a second—that’s quite a speedup!

There’s a trick, of course. Both X and Y are products of two large primes, but it so happens that one of the primes is a shared factor of both numbers. For finding that shared factor, we can rely on a very old, very famous, very simple and very efficient algorithm: Euclid’s algorithm for the greatest common divisor. In Python it looks like this:

    def gcd(a, b):
        if b == 0:
            return a
        else:
            return gcd(b, a % b)

(The ‘%’ in the last line is Python’s modulo or remainder operator.) When this function is applied to X and Y, the recursion is invoked 297 times before returning the common factor:

F = 10704679319376067064256301459487150226969621912489596482628509800922080318199635726117009340189103336170841315900354200725312700639146605265442630619090531

You don’t have to take my word for it that F divides both X and Y. Do the division: In that way you will also learn the co-factors of X and Y.
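The division itself can be sketched in a few lines of Python. This is only a toy: it assumes small stand-in primes (101, 103 and 107) rather than the 512-bit values above, the variable names are made up for illustration, and it uses the standard library's `math.gcd` in place of the recursive function.

```python
from math import gcd

# Toy stand-ins for the 512-bit primes; p is the shared factor.
p, q1, q2 = 101, 103, 107
x = p * q1   # plays the role of X
y = p * q2   # plays the role of Y

f = gcd(x, y)                        # Euclid's algorithm recovers the shared prime
assert x % f == 0 and y % f == 0     # f divides both numbers exactly

cofactor_x = x // f                  # the other prime factor of x
cofactor_y = y // f                  # the other prime factor of y
print(f, cofactor_x, cofactor_y)     # prints: 101 103 107
```

The same three steps (one GCD, two integer divisions) apply unchanged to numbers of any size, since Python integers have arbitrary precision.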

If X and Y were components of public keys in the RSA cryptosystem, their shared factor would create a huge hole in the security fence. And the problem is particularly insidious in that each of the two keys, when examined in isolation, looks perfectly sound; the weakness only becomes apparent when you have both members of the pair.
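To make the hole concrete, here is a minimal sketch of how a shared factor exposes a private key. It assumes textbook RSA without padding, toy primes, and a public exponent of 17; the function name `recover_private_exponent` is hypothetical, and `pow(e, -1, phi)` needs Python 3.8 or later.

```python
from math import gcd

def recover_private_exponent(n, e, other_n):
    """Return the private exponent for (n, e) if n shares one prime with other_n."""
    p = gcd(n, other_n)
    if p in (1, n):
        return None              # no usable shared factor
    q = n // p                   # the co-factor completes the factorization
    phi = (p - 1) * (q - 1)
    return pow(e, -1, phi)       # modular inverse of e modulo phi

# Two toy public moduli that share the prime 1009.
n1 = 1009 * 1013
n2 = 1009 * 1019
e = 17

d = recover_private_exponent(n1, e, n2)
message = 424242
ciphertext = pow(message, e, n1)          # encrypt with the public key
assert pow(ciphertext, d, n1) == message  # decrypt with the recovered key
```

Neither modulus gives anything away when passed to the function alongside an unrelated key; the private exponent falls out only when the two related keys meet.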

This potential vulnerability of factoring-based encryption methods has been known for decades, but it seemed there was no reason to worry because coincidentally shared factors are so utterly unlikely. A couple of weeks ago I heard an eye-opening talk by Nadia Heninger, a member of a group that has searched for such unlikely coincidences in the wild. They found 64,000 of them. Reason to worry.

Heninger and her colleagues polled every public IPv4 address in the known universe, requesting a connection on the ports commonly used for two secure communication protocols, TLS and SSH. For every address that responded to queries on those ports, they collected the server’s public encryption key, then closed the connection. Here I am going to discuss only the TLS servers with RSA keys; there were vulnerabilities in other cryptosystems as well, but the issues are slightly different.

Before telling the rest of this story, I have to pause here. For those of you in the born-digital generation, pinging every address on the Internet may sound like a routine walk around the block on a sunny afternoon, but I confess that I never would have dared to try anything so audacious. It’s like knocking on every door in America, or calling every possible telephone number, a task that’s not feasible for individuals of ordinary means, and that also seems unforgivably rude. But standards of feasibility and rudeness are different in the world of machine-to-machine communication. Computers don’t care if you make four billion hangup calls (although some system administrators might frown on the practice). And, after all, the encryption keys being collected are by definition public.

Back to Heninger’s story. They ran their scan of IP addresses from Amazon’s Elastic Compute Cloud service, where the data-collection phase of the project took a few days. Out of \(2^{32} \approx 4\) billion addresses (less a few special-purpose or reserved areas) they found about 29 million servers accepting connections on the standard port for TLS, but only 12.8 million of those servers supplied public keys. Some 60 percent of the keys retrieved were not unique. Presumably, most of the duplicates are accounted for by organizations that have multiple servers all operating with the same cryptographic credentials, but there were also instances of apparently unaffiliated individuals sharing a key. This is rather like discovering that your house key also opens your neighbor’s front door. (And vice versa.)

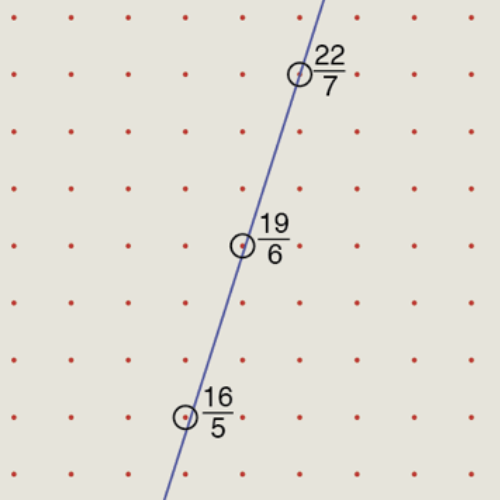

After eliminating the duplicates, some 5.8 million distinct RSA keys needed to be tested for common factors. Even though Euclid’s GCD algorithm is highly efficient, running it on all possible pairings of keys would be a strain. There’s an ingenious shortcut, based on the observation that if \(Y\) is relatively prime to each of \(X_1, X_2, \ldots, X_n\), then it also has no factor in common with the product \(X_1 \times X_2 \times \dots \times X_n\). Thus it’s possible to detect the presence of shared factors with just \(n\) GCD operations, instead of \(n^2\). A drawback of this approach is that the product of millions of RSA keys is a huge number, and intermediate results have to be swapped out to disk. Nevertheless, the processing was completed in an hour and a half on the Amazon cloud at a cost of $5.
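The product shortcut can be sketched as follows. This is a simplified illustration under stated assumptions, not the code the researchers ran: it takes a list of distinct toy moduli, multiplies them all together once, and then does a single GCD per key against the product of the others. The function name `shared_factors` is hypothetical, and `math.prod` needs Python 3.8 or later. For scale, 5.8 million keys would otherwise mean roughly \(1.7 \times 10^{13}\) pairings.

```python
from math import gcd, prod

def shared_factors(moduli):
    """Map each modulus to the factor it shares with the others (1 if none).

    Assumes the moduli are distinct, i.e. duplicates were removed first.
    """
    total = prod(moduli)          # one huge product of all the keys
    result = {}
    for n in moduli:
        rest = total // n         # product of every other modulus
        result[n] = gcd(n, rest)  # one GCD per key instead of one per pair
    return result

keys = [101 * 103, 101 * 107, 109 * 113, 127 * 131]
print(shared_factors(keys))
# prints: {10403: 101, 10807: 101, 12317: 1, 16637: 1}
```

A result of 1 means the key shares nothing with the rest of the collection. If a key shares each of its two primes with a different neighbor, the GCD comes back as the whole modulus, and a pairwise pass over the flagged keys is needed to separate the factors. Large-scale implementations typically organize the multiplications and reductions as product and remainder trees to keep the giant intermediate values manageable; this sketch skips that.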

The output was a list of 64,081 compromised keys for TLS hosts, about 0.5 percent of all such keys collected. For obvious reasons, Heninger et al. are not publishing that list; they tried to contact the owners of vulnerable machines, and they offer a web lookup service where you can check to see if your key is on the list.

The good news is that none of the weak keys are guarding access to major web servers hosting bank accounts or medical records or stock markets or military installations. Most of them are found in embedded networked devices, such as routers and firewalls. That’s also the bad news. A programmer with malicious intent who can gain control of a well-placed router can make a lot of mischief.

Could the prevalence of common factors in RSA keys be explained as a product of pure bad luck? To answer this question we need to solve a birthday problem. The original version of this problem asks how many people you need to bring together before there’s a good chance that two or more of them will have the same birthday (assuming birthdays are distributed randomly over the 365 days of the year). An order-of-magnitude approximation is \(\sqrt{365}\), or about 19. (The actual number is 23.) For the RSA variant of the problem, we ask how many 512-bit primes you need to generate—assuming you select them uniformly at random from the set of all such primes—before you have a good chance of seeing at least one prime twice. In this case we replace 365 with the number of 512-bit primes, which is in the neighborhood of \(10^{150}\). Thus there’s scarcely any chance of a collision until the number of randomly generated primes approaches \(10^{75}\). We’re only at \(10^{7}\) so far. As Heninger said in her talk, we have enough 512-bit primes to assign a public encryption key to every atom in the universe, with little worry over possible duplicates.
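The numbers in this argument are easy to check. The sketch below computes the exact birthday threshold by multiplying out the probability of no match, and then applies the standard small-probability approximation \(k^2 / 2N\) for \(k\) draws from \(N\) equally likely values. The function names are made up for illustration, and \(10^{150}\) and \(10^{7}\) are the rough figures quoted above.

```python
import math

def birthday_threshold(days=365, target=0.5):
    """Smallest group size whose chance of a shared birthday reaches target."""
    p_no_match = 1.0
    people = 0
    while 1.0 - p_no_match < target:
        p_no_match *= (days - people) / days   # next person avoids all earlier ones
        people += 1
    return people

def collision_estimate(draws, population):
    """Approximate chance of any repeat; valid when the result is far below 1."""
    return draws * (draws - 1) / (2 * population)

print(round(math.sqrt(365), 1))            # 19.1, the order-of-magnitude guess
print(birthday_threshold())                # 23, the exact answer
print(collision_estimate(10**7, 10**150))  # about 5e-137
```

With ten million primes drawn uniformly from a pool of about \(10^{150}\), the chance of even a single repeat is on the order of \(10^{-137}\).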

According to this line of reasoning, it would be a colossal fluke to see even one duplicated RSA prime, and finding 64,000 of them is clear evidence that those primes are not being chosen uniformly at random. The blame apparently lies with pseudorandom number generators. It’s not that the algorithms are defective. In many cases, cryptographic keys are being generated immediately after a machine is booted, when it just can’t scrape together enough entropy to make a passable pseudorandom number.
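A toy model shows how starved entropy turns into shared factors. Everything here is an assumption made for illustration: two devices boot with the same generator state (`boot_seed`), pick their first prime, and only then receive a little device-specific entropy (`late_entropy`) before picking the second. The sketch uses Python's non-cryptographic `random` module and 24-bit primes, neither of which belongs anywhere near real key generation.

```python
import random
from math import gcd

def is_prime(n):
    """Trial division; adequate for the small toy primes used here."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True

def random_prime(rng, bits=24):
    """Draw odd candidates with the top bit set until one is prime."""
    while True:
        candidate = rng.getrandbits(bits) | (1 << (bits - 1)) | 1
        if is_prime(candidate):
            return candidate

def make_modulus(boot_seed, late_entropy):
    rng = random.Random(boot_seed)       # identical state on every device
    p = random_prime(rng)                # so every device picks the same p
    # Assumed: a little device-specific entropy arrives before q is chosen.
    rng.seed(boot_seed * 1_000_003 + late_entropy)
    q = random_prime(rng)
    return p, q, p * q

p_a, q_a, n_a = make_modulus(boot_seed=42, late_entropy=1)
p_b, q_b, n_b = make_modulus(boot_seed=42, late_entropy=2)

assert p_a == p_b                    # same starting state, same first prime
assert gcd(n_a, n_b) % p_a == 0      # the GCD of the two moduli exposes it
```

In this model, had the two devices received no differing entropy at all, they would have produced identical keys; the bit of late entropy leaves them with distinct moduli that still share a factor.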

Sources:

Heninger gave her talk at a birthday party for Microsoft Research New England on October 9. Eventually, video may be available.

The paper describing the project is “Mining Your Ps and Qs: Detection of Widespread Weak Keys in Network Devices,” by Nadia Heninger, Zakir Durumeric, Eric Wustrow, and J. Alex Halderman, presented at the 2012 USENIX Security Symposium. Preprint.

At the Microsoft symposium Heninger also discussed a later study of other cryptographic weaknesses in certain smart cards. See “Factoring RSA keys from certified smart cards: Coppersmith in the wild,” by Daniel J. Bernstein, Yun-An Chang, Chen-Mou Cheng, Li-Ping Chou, Nadia Heninger, Tanja Lange, and Nicko van Someren. Preprint.

Arjen Lenstra and his colleagues have independently discovered and reported similar vulnerabilities. See “Ron was wrong, Whit is right,” by Arjen K. Lenstra, James P. Hughes, Maxime Augier, Joppe W. Bos, Thorsten Kleinjung, and Christophe Wachter. Preprint.

Open-source software developed by Zakir Durumeric, Eric Wustrow, and J. Alex Halderman at the University of Michigan for scanning large blocks of the public IP address space: ZMap. A descriptive paper from the 2013 USENIX Security Symposium.

The Michigan group’s web service for checking public keys against the collection of known factorable keys: factorable.net.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 27 October 2013

Converted to Eleventy framework: 22 April 2025

And don’t forget bugs:

Debian is widely deployed. It is not clear how many keys generated between 2006 and 2008 were re-generated after Debian patched this hole.

Heninger et al. did check their data for “Debian weak keys.” They found 4,147 such TLS keys and 53,141 SSH keys.

I can explain a huge amount of these duplicate primes: Hardware that lacks an RTC w/battery.

There’s millions of embedded systems (routers, switches, PLCs, etc) that do not have proper clocks inside them. So when they lose power all devices with the exact same firmware will have the exact same entropy pool. If these systems run web servers (for configuration and monitoring purposes) they will (usually) automatically generate a new self signed certificate. When that happens there is a good chance they will get the same primes.

I’m actually surprised that the number is so low. Apparently the Linux kernel is doing a decent job at randomization despite the lack of a RTC and identical hardware/software.

And how are the primes from the RNG selected? Just by using 2^n-1 or something? Then there are far fewer primes to test for.