Shut Up and Calculate!

by Brian Hayes

Published 12 August 2008

In my latest American Scientist column I advert to a famous passage in Leibniz (translation by Robert Latta, 1898):

When controversies arise, there will be no more necessity of disputation between two philosophers than between two accountants. Nothing will be needed but that they should take pen in hand, sit down with their counting tables and (having summoned a friend, if they like) say to one another: Let us calculate.

That final exhortation, “Let us calculate,” is a single word in Leibniz’s Latin text, lending me a title for my column: Calculemus! I might have expressed the same thought more forcefully, quoting somebody or other, as: “Shut up and calculate!”

The column is a manifesto in support of computer programming as a tool for exploring, experimenting and problem-solving—what I like to call inquisitive computing. This is an activity that shouldn’t need defending or promoting, and yet I feel it is becoming a neglected art. These days, computer programming is often viewed mainly as an aspect of software development—but that’s not the kind of programming I have in mind. In software development, the program is the product; it’s what you hand over to the end user. Inquisitive computing is different: The program is just a device for getting an answer. The ultimate goal is not the program itself but the result of running the program. Indeed, once the answer is in hand, the program is often of no further use or interest and can be thrown away.

My gripe is that tools for inquisitive computing are not getting as much attention as they once did. The world of software development offers luxurious, richly appointed programming environments, systems such as Xcode on the Macintosh, or the Eclipse editor favored by many Java programmers. But these systems are not well-adapted to the needs of inquisitive computing, where the emphasis is on low overhead and incremental, trial-and-error methods.

Am I alone in feeling aggrieved about this situation? I’d be interested in knowing what others think. Do you do the kind of programming that I’m describing as inquisitive computing? What tools do you use? Are you happy with them? (Let me hasten to add that I don’t see this as another food fight over the virtues of various programming languages. I’d welcome well-made environments for inquisitive computing based on any language whatever.)

In any case, I don’t want to sound too grumpy about this. The new column is also supposed to be an anniversary celebration: I’ve been writing these essays for 25 years. To mark the occasion I am trying to make some of my earlier work available online. There are no machine-readable copies of the early columns, so my only recourse is to run paper pages through the scanner. The result is a bloated PDF of marginal quality; sorry, it’s the best I can manage. So far I have scanned about a dozen of the columns I wrote for Scientific American and Computer Language in the 80s and for The Sciences in the 90s. I’ll be adding more over the next few weeks. There are links in my publications list.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 12 August 2008

Converted to Eleventy framework: 22 April 2025

I use Sage for that sort of work. With Python as its base, it integrates many of the most useful and highly regarded open source mathematics packages in a consistent way. It also allows symbolic calculation. You can run it both interactively and from the command line. Awesome!

Brian, you’ve hit upon a very interesting topic for today. At the company where I work we do both kinds of computing. We use MATLAB for inquisitive computing where one’s goal is to test an algorithm or explore behavior. And, we use C++ when we develop code to deliver to a customer.

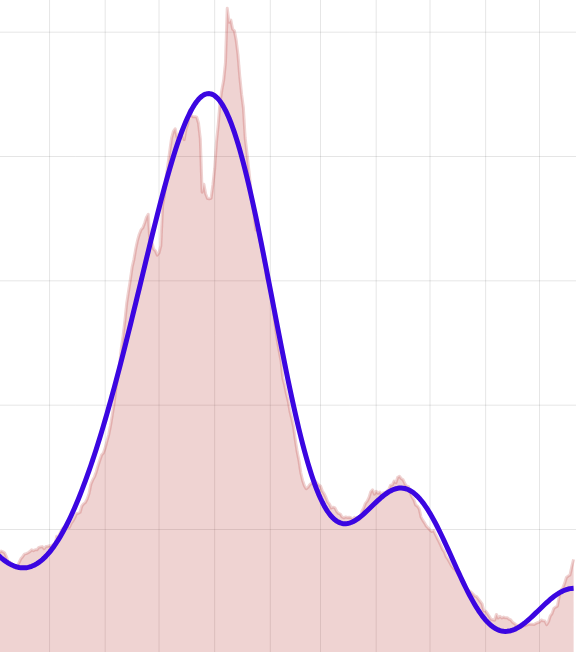

We have a project now that involves a Kalman filter to track targets. My engineer and I developed the routine from a standard textbook, and tuned it in MATLAB. But in the deliverable, he (the engineer) wrote the routine in C++ and integrated it into our GUI and display code. That way, we deliver a single executable.

I think fundamental languages like C++ are too “primitive” for inquisitive computing, and one really needs a more comprehensive package such as MATLAB or Mathematica, or maybe Octave, which is open source. However, if your inquisitive computing leads to a product, or to code to be integrated in a product, then you have to deliver releasable code, so an executable is best, say from C++.

(I don’t believe, and maybe I’m wrong, that one can deliver a program, if that’s the goal, in a language that requires a particular installed software platform to run, although I think Java may be an exception.)

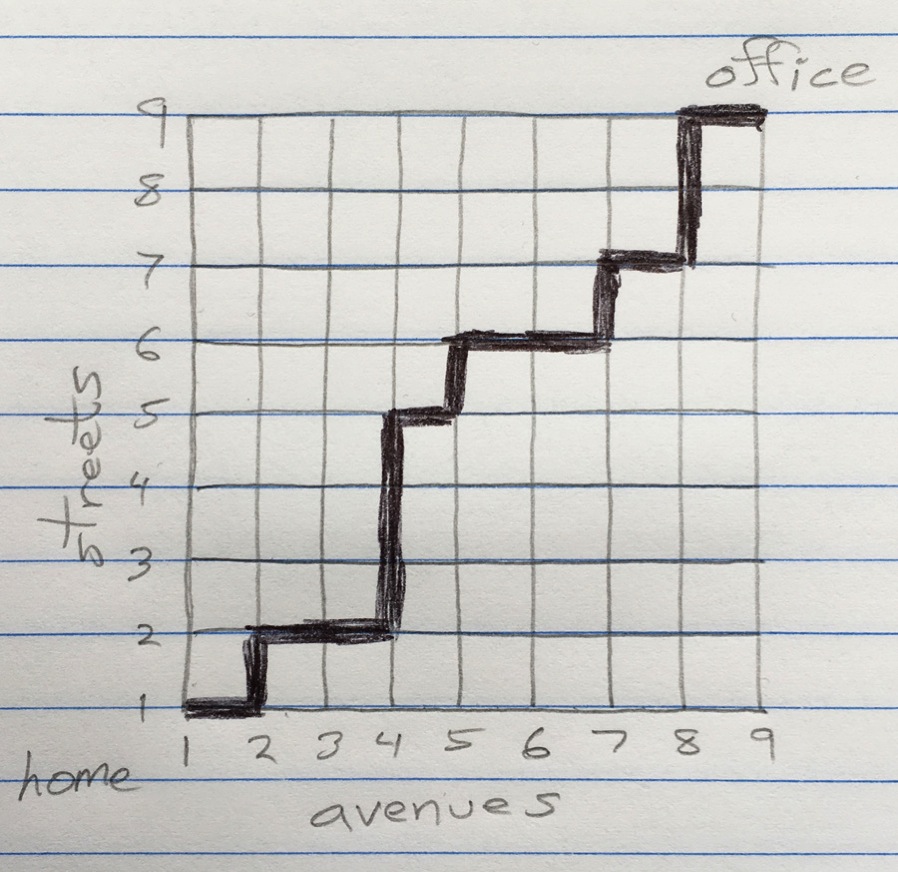

At home, for what it’s worth, I do my experiments (I’m interested in billiards) with MATLAB because I can program routines easily, debug them quickly (that’s hard in, say, C or even BASIC), and get answers and see behaviors right away. Plus, plotting data is simple with MATLAB and I can easily save the plots for papers and reference.

I hope that helps in some small way.

I find it very refreshing to hear about programming as something other than software development!

I use Haskell for exploratory computations, but it rarely turns out that “The ultimate goal is not the program itself but the result of running the program.” I almost always get wrapped up in the structure of the relevant computations, so that the program itself becomes a document explaining the problem.

I also use Haskell for this type of thing, with a high degree of satisfaction. I find it has most of the niceties you mentioned in your column: sufficiently high-level to be good for maths, extensive libraries, an active user base and excellent documentation, and I find vim + ghc to be a satisfactory working environment.

I use R (see http://www.r-project.org/) for many things, not just statistics. It is almost infinitely extensible, and if there’s some function or algorithm you need, chances are someone has written an R package to do it. It has powerful graphical capabilities, and as these are also extensible, there are packages available to generate nearly any type of graph or figure. There is an active user community, and extensive documentation available.

The main drawback is that R has something of a steep learning curve. Also, to be most efficient, code must be “vectorized”, something that is not always easy to achieve and makes the resulting programs difficult for others to read and understand.

I am a researcher and lecturer at the Johannes Kepler University in Linz, and I very much like to play around with math and physics and to visualize results (see e.g. http://www.bru.hlphys.jku.at ). Let me give my personal view of the inquisitive computing problem.

In the earlier days of PC computing it was very simple to calculate and program something with Quick Basic. It is low-level programming by current standards, but it was simple and most PC users could do it. At that time PCs were used more by active programmers and mathematicians, since it took some knowledge just to set up and use a PC.

Things changed. PCs got simpler to use (well, this could be discussed, but let it stay for now) and came to be used as typewriters and calculating machines. So programs were needed with which people can do something without knowing what’s going on behind the scenes, and this is the big market where companies can earn money. Standard software is made in such a way that one enters words and numbers and clicks on buttons to get some output. No programming is needed any more.

This goes so far that even students of maths and physics have trouble doing active inquisitive computing. I noticed that these students had difficulty with exercises where they were asked to write a small program for summing up terms in a physical calculation and to present the result as a graph. One student could do it with C, another with Mathematica, but most could not! Students nowadays do not know of a program like Quick Basic. I have looked around a little, but so far I could not give a really satisfying answer.

I have personally used Matlab for about 20 years! Easy calculations and the creation of graphics are very simple. At the same time it is very powerful: larger projects can be realized, and the numerical capabilities are strong. Yet I am getting more and more disappointed with Matlab, and I am just starting to switch to Python.

What are the drawbacks of Matlab? It is quite a specialized software package, and when I want to distribute and share my work with others (students, visitors to my web site) this does not work, because very few people have the quite expensive license for it. Even for me it is getting too expensive to have a personal copy of Matlab at home. Typically people at universities have a license, but not the crowd on this planet.

Matlab also offers the possibility of compiling a program and giving that away. But the compiler has to be bought separately, and in addition the compiled programs run only with a runtime library of about 150MB. The 150MB is not a problem if you distribute your software on a CD, but if you want to put it on the web, not many people would like to download that in addition.

For these reasons I am going to move away from Matlab. One of my students has tested several alternatives, and it seems that for us Python is the best one.

Python is free software with an open license, so cost is not a problem. It is a modern, object-oriented language that is very clearly structured. It is an interpreted language (via intermediate byte code). There are many libraries available, and well-developed C and Fortran libraries can also be called quite simply.

The drawback of an interpreted language is that it is not possible to give away a small exe file. In Python there is a possibility of packing the interpreter together with the program into an exe file, which can then be used in that simple way, but I still have to test whether that really works satisfactorily.

I very much agree with what you have written here and in American Scientist. I frequently do the sort of programming you describe and agree that a comfortable tool is missing.

I have been using R for several years and find its rich set of data structures, functions, and data-visualisation tools useful. While reading your AS column I was thinking “He should take a look at R” and then saw that you probably already had. John S. (above) may be right in saying that R has a steep learning curve but I think this is, in part, due to a lack of step-by-step introductory texts that do not assume a great deal of prior statistical or mathematical training. Some rather doctrinaire design decisions have been made (e.g. the need to use regular expressions in file operation functions such as dir() rather than the simple ‘*’ and ‘?’ placeholders you would use in a shell). The user-interface needs some work (this is, I believe, in hand and the OS-X interface is a great improvement over the dumb terminal available under Linux). Error messages remain obscure. As for the need for vectorised code - as with other languages (MATLAB springs to mind) you can choose to vectorise code or use standard programming structures for looping. The interactive nature of R means that you can play with vectorising until you “get it”. R is open source, has a dedicated team behind it and the mailing lists are excellent although, as is the case with much user-supported software, some posters can be aggressive towards new users.

Having a good tool like R does not stop my missing a smaller and simpler environment. I sometimes wish for a sort of arithmetic assembly language like those that ran on calculators such as the old HP-15C … with a clever user interface you’d get a language that made it impossible to make syntax errors but was powerful enough to do some real work. An interpreted Haskell like Hugs inside a Quick Basic-like IDE might do the trick.

Great term: inquisitive computing. I’ll probably get laughed at for this, but I’m old enough not to care; for my inquisitive computing needs, I use a TI Voyage 200 graphing calculator. It runs on 4 AAA batteries, is small enough to carry around when the inspiration strikes and includes a fairly comprehensive programming language called TIBasic. But TIBasic has no line numbers, supports recursion, lists, 2D matrices, floating-point range of 1E±999, complex numbers and does exact rational arithmetic with up to about 600 digits. The disadvantages are monochrome display and speed, even though the hardware is based on a 12MHz 68000. The HP50G is in the same class, even faster (50 MHz ARM) and has a lot more functions built in, but I like the larger display and qwerty keyboard of the v200. It is usually very quick to bash together a program to try the ideas in your columns, but for some, of course, the results are glacially slow in coming.

Of course, for my ‘real’ engineering work, I use fast hardware and expensive software that my employer is willing to pay for.

My daughter recently got an Asus EEE, and one of my next projects is to get my own & turn it into a much more capable box than the v200 for inquisitive computing. I’ll be trying out open-source options such as R, Gnuplot, GINAC, etc. I’m hoping to be able to stitch enough of this stuff together to create an environment with the ease of use of the v200, but faster & more capable.

Also: I always enjoy your columns. “Why W?” was a classic and one of my all-time favorites. Thanks! (And yes, I’ve implemented a Lambert’s W function for my v200 …)

I have to enthusiastically second Doug Burkett: I’m a math grad student who’s worked with many languages and software packages over the years, but the TI BASIC on my TI-83, though I barely even use it these days, will always have a special place in my heart.

I’ve actually been reminiscing about that old workhorse ever since I read Peter Pesic’s review of “Falling for Science: Objects in Mind” in the latest American Scientist. The book, edited by Sherry Turkle, is a collection of essays written by MIT students (and a few senior scientists) asked to respond to this question: “Was there an object you met during childhood or adolescence that had an influence on your path into science?” The review is a fascinating read, as is, I’m sure, the book.

My most memorable experience with TI BASIC, and one which without a doubt steered me square on the path to math and science, took place in my first calculus class; we had just learned Euler’s method for numerical integration and I decided to implement it on my TI-83. I started tinkering with TI BASIC back in middle school, but this was my first foray into truly inquisitive computing, and it was nothing less than revelatory. What had been mere symbols on paper suddenly took on an immediate, spectacular reality. It was one of the formative intellectual thrills of my young life.

I guess the point of this comment, besides TI BASIC nostalgia and a plug for that really interesting book review, is to point out that the powerful and accessible computational tools we have today are a boon not only to professional and amateur problem solvers but to educators as well. Inquisitive computing is an incredibly powerful way to spark young people’s interest in math and quantitative subjects in general, and something that I hope will be embraced by educators.
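For readers who have not met it, the Euler's method experiment described above can be tried in a few lines. What follows is only a minimal sketch in Python, not the commenter's TI BASIC program; the function name `euler` and the test equation y' = y with y(0) = 1 are illustrative choices, picked because the exact answer at t = 1 is e.

```python
import math

def euler(f, t0, y0, t_end, steps):
    """Approximate y(t_end) for y' = f(t, y), y(t0) = y0, with fixed-step Euler."""
    h = (t_end - t0) / steps      # step size
    t, y = t0, y0
    for _ in range(steps):
        y += h * f(t, y)          # follow the tangent line for one step
        t += h
    return y

if __name__ == "__main__":
    # Illustrative test problem (an assumption, not from the post):
    # y' = y, y(0) = 1, whose exact solution at t = 1 is e.
    for n in (10, 100, 1000):
        approx = euler(lambda t, y: y, 0.0, 1.0, 1.0, n)
        print(n, approx, abs(approx - math.e))
```

Running it with more steps shows the error shrinking roughly in proportion to the step size, which is the kind of behavior that is easy to see by experiment and harder to feel from the formula alone.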

For what you call inquisitive computing, I prefer the environment called yorick. It’s an interpreted language for scientific computing supplying not only a huge mathematical tool collection but also an interactive graphics package which I personally find pretty handsome. Despite being an interpreted language, it is rather efficient. From my experience, even a newcomer gets up to speed pretty quickly and can solve nontrivial problems.

As yorick seems to live comfortably in all major operating-system worlds (Linux, Mac, Windows) it is quite versatile to please many tastes. To learn more about yorick, visit:

http://yorick.sourceforge.net/index.php

I come from the music world where, in addition to pencil and paper instrumental composition, I work with a software sound synthesis package called SuperCollider (http://supercollider.sourceforge.net//). Turns out it has an adequately expressive object-oriented language for inquisitive programming as well. SC is happiest on a Mac, but there are PC and Linux versions available.

Your column is always my first stop in each issue of AS.

Thanks for a great article.

I was pleased to find a connection between your perfect medians problem and some other classical recreational mathematics problems. In Chapter 2 of “Time Travel and Other Mathematical Bewilderments” Martin Gardner discusses numbers that are both square and triangular, just like your perfect medians solution. As he describes, the solution to finding these numbers was first provided by Euler and is related to solutions of a more general set of equations known as Pell equations. Definitely worth re-reading. :)
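The link between square-triangular numbers and Pell equations mentioned above can be checked by experiment. If N = m² = n(n+1)/2, then multiplying by 8 and completing the square gives (2n+1)² − 8m² = 1, a Pell equation x² − 8y² = 1 with x = 2n+1 and y = m. The sketch below is only an illustration in Python (the function names are made up for this example); it compares a brute-force search with numbers generated from the Pell recurrence that starts at the smallest solution (x, y) = (3, 1).

```python
from math import isqrt

def brute_force(limit):
    """Triangular numbers n(n+1)/2, for n up to limit, that are also perfect squares."""
    found = []
    for n in range(1, limit + 1):
        t = n * (n + 1) // 2
        r = isqrt(t)
        if r * r == t:
            found.append(t)
    return found

def pell_generated(count):
    """Square-triangular numbers y*y from solutions of x^2 - 8*y^2 = 1."""
    x, y = 3, 1                           # smallest positive solution
    out = []
    for _ in range(count):
        out.append(y * y)
        x, y = 3 * x + 8 * y, x + 3 * y   # step to the next solution
    return out

if __name__ == "__main__":
    print(brute_force(2000))
    print(pell_generated(5))
```

Both calls should print the same list, beginning 1, 36, 1225; the recurrence simply gets there without searching.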

Like the others who have posted, I spend quite a bit of time doing inquisitive computing, and have been similarly frustrated when it comes to finding the right tool.

I’ve had some fun using Fathom and Tinkerplots “data exploration” software for my recreational computing (both are from Key Curriculum Press, http://www.keypress.com/). The software is intended to allow students to carry out simple data and statistical manipulations, but its rudimentary (but surprisingly powerful) support for formulas and generated data makes it useful for the sort of inquisitive programming that you’ve written about. These environments are far from ideal, but the combination of simple programming support and really nice graphs makes them well suited for many explorations. A nice benefit of using these environments is that the result isn’t merely the answer to your calculations, but also a document that presents the results in a way that can be further manipulated and explored.

In a funny coincidence, one of the Fathom explorations that I’ve had my students carry out was to program a simulation of the Monty Hall problem. It’s easy to program and easy to see the results, but it’s not so easy to convince everyone to believe what they’ve found. :)

Thanks again!
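A Monty Hall simulation of the kind mentioned above really is short. Here is one possible sketch in Python rather than Fathom; the function names, the trial count and the fixed random seed are all illustrative assumptions, and the rules assumed are the standard ones (the host always opens a door that hides no car and is not the player's pick).

```python
import random

def play(switch, rng):
    """Play one round; return True if the player ends up with the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)
    pick = rng.choice(doors)
    # The host opens a door that is neither the player's pick nor the car.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Move to the one remaining closed door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car

def win_rate(switch, trials=100_000, seed=1):
    """Fraction of rounds won with the given strategy."""
    rng = random.Random(seed)
    return sum(play(switch, rng) for _ in range(trials)) / trials

if __name__ == "__main__":
    print("switch:", win_rate(True))
    print("stay:  ", win_rate(False))
```

Switching should win about two-thirds of the time and staying about one-third, which is exactly the result that people find hard to believe.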

Interpreted languages as a whole deliver that quality, I believe.