Pulling the Goalie

by Brian Hayes

Published 29 November 2007

Don Elgee, a retired teacher of mathematics and computer science from Ottawa, sends the following inquiry:

In hockey, when a team is down by a goal with about one minute to go, the goalie is pulled in favor of another offensive player. This illustrates a key point in the strategy of most games.

The object is not to maximize points for, and minimize points against; rather, it is to win or tie the game. As the game nears its conclusion the winning team plays more conservatively and the losing team more radically. But when—and to what extent—should a team change its style of play?

To clarify the problem I tried to conceive the simplest possible example. It turned out to be much more complex than I expected.

Consider the following simple game: Two players toss a die on alternate turns. The first one to reach 100 is the winner. Players have a choice of two dice—one is normal (6, 5, 4, 3, 2, 1) and the other has faces with 6, 6, 5, 1, 1, 1. When should the losing player switch to the second die?

Is there a mathematical solution? Could you determine this by experimental computer simulation? Does the best strategy depend on the strategy the opponent is likely to choose next?

This simple problem is difficult enough, but the sports situation could better be simulated by adding a third die with 4, 4, 3, 3, 3, 3.

Has this issue been subject to mathematical study? With all the statistics used in professional sport has anyone tried to include this factor? Has it ever been incorporated in computer simulations?

Hockey is not my sport, but these are interesting questions. Inspired by Elgee’s letter, I’ve run a few of the obvious simulations. For the time being, however, I’m not going to say much about the results. What I’ve learned so far does not lead to any simple, pithy rule of thumb that a hockey coach could use to decide when to pull the goalie. Nor do I have a clear grasp of how best to play the dice game. I’m hoping someone else will offer a sharper analysis. Toward that end I have some further notes and observations.

Under the stated rules—alternating turns, with victory going to the first player who reaches 100—the privilege of moving first brings a substantial advantage. In a 100-point game with standard dice, the first player can expect to win about 55 percent of the time. If two players are tied at 99, the first to roll is definitely going to win, regardless of which die is chosen. Because of this bias, we probably need to consider different strategies for the first and second players (like white and black in a chess game). An alternative is to add a further element of randomness to the game: In each round, the player to move is determined by the toss of a fair coin.
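The first-mover advantage can be checked exactly rather than by simulation, using a short recursion over the points each player still needs. This is a minimal sketch under three assumptions: both players roll the standard die, turns strictly alternate, and reaching *or passing* 100 wins. The function name `mover_wins` is our own.

```python
from functools import lru_cache

STANDARD_DIE = (1, 2, 3, 4, 5, 6)


@lru_cache(maxsize=None)
def mover_wins(a: int, b: int) -> float:
    """Chance that the player about to roll wins, when that player still
    needs `a` points and the opponent needs `b`.

    Assumes strictly alternating turns, the standard die for both players,
    and that reaching or passing the target ends the game.
    """
    total = 0.0
    for face in STANDARD_DIE:
        if face >= a:
            total += 1.0                            # this roll finishes the game
        else:
            total += 1.0 - mover_wins(b, a - face)  # the opponent rolls next
    return total / len(STANDARD_DIE)


print(f"first player wins {mover_wins(100, 100):.4f} of 100-point games")
print(mover_wins(1, 1))  # tied at 99: the first to roll always wins -> 1.0
```

Each call reduces the total number of points still needed, so the recursion terminates, and memoization keeps it to about 10,000 distinct positions.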

Elgee’s two nonstandard dice have faces that sum to 20, less than the standard die’s 21. As a model of hockey tactics, this bias makes sense: It reflects the risk of leaving the goal untended. But if we ignore hockey and just consider the dice game on its own merits, it might be more interesting to explore a symmetrical contest with dice marked 6, 6, 6, 1, 1, 1 and 4, 4, 4, 3, 3, 3. Then we’d be looking at three distributions that have the same mean but different shapes.
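The arithmetic behind these comparisons is easy to verify. The sketch below computes the exact mean and variance of each die mentioned so far. The labels in `DICE` and the helper name `mean_and_variance` are ours.

```python
from fractions import Fraction

DICE = {
    "standard": (6, 5, 4, 3, 2, 1),
    "Elgee high-risk": (6, 6, 5, 1, 1, 1),
    "Elgee low-risk": (4, 4, 3, 3, 3, 3),
    "symmetric high-risk": (6, 6, 6, 1, 1, 1),
    "symmetric low-risk": (4, 4, 4, 3, 3, 3),
}


def mean_and_variance(faces):
    """Exact mean and variance of a fair die with the given faces."""
    n = len(faces)
    mean = Fraction(sum(faces), n)
    variance = Fraction(sum(f * f for f in faces), n) - mean * mean
    return mean, variance


for name, faces in DICE.items():
    mean, var = mean_and_variance(faces)
    print(f"{name:20} sum={sum(faces):2}  mean={float(mean):.3f}  variance={float(var):.3f}")
```

Elgee's two dice share a mean of 10/3, with variances of 50/9 and 2/9. The symmetric pair shares the standard die's mean of 7/2, with variances of 25/4 and 1/4, compared with 35/12 for the standard die.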

Generalizing further, we could allow dice marked with any six non-negative integers that sum to 21. And what would happen to the game if we also allowed negative faces, as on a die marked 100, -16, -16, -16, -16, -15? Or we could allow the players to choose continuous probability density functions.

It’s easy to imagine circumstances where a “high-risk” die should be advantageous. If the score is 99 to 94, then the trailing player should be willing to accept any trade-off that improves the odds of rolling a 6; even a 6, 6, 0, 0, 0, 0 die is preferable to the standard die. Conversely, if you’re ahead near the end of the game, the “low-risk” die seems favorable. All this suggests there ought to be some fairly simple guidelines for choosing the best die in any situation. But, as Elgee noted, it’s more complicated than you might expect.
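The 99-to-94 case reduces to a one-roll calculation, assuming alternating turns with the trailing player about to roll. The leader will finish on any roll, so only the chance of scoring 6 right now matters. The helper name `chance_to_finish` is ours.

```python
from fractions import Fraction


def chance_to_finish(faces, needed):
    """Probability that one roll of a fair die with these faces scores at least `needed`."""
    hits = sum(1 for f in faces if f >= needed)
    return Fraction(hits, len(faces))


# Trailing 94 to 99: the trailer needs 6 now, because the leader finishes next turn.
for faces in [(6, 5, 4, 3, 2, 1), (6, 6, 5, 1, 1, 1), (6, 6, 0, 0, 0, 0)]:
    print(faces, chance_to_finish(faces, 6))  # 1/6, then 1/3, then 1/3
```

The 6, 6, 0, 0, 0, 0 die has a much lower mean, yet it doubles the trailing player's chance of winning, just as Elgee's 6, 6, 5, 1, 1, 1 die does.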

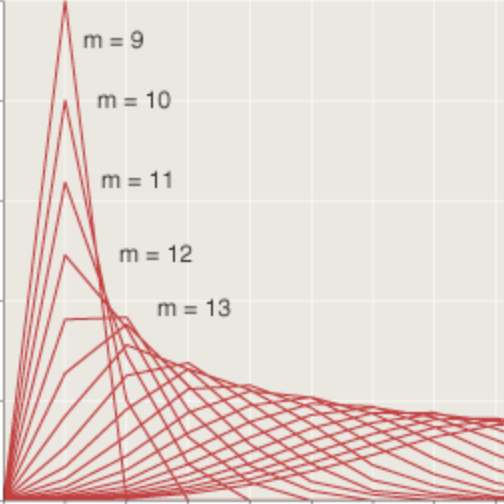

In my simulations I found that with the score tied at 94, switching to the 6, 6, 5, 1, 1, 1 die (while your opponent continues to play the standard die) yields an advantage of 2.9 percent. But when the score is 96 to 96, making the same switch produces a deficit of 3.7 percent. Interestingly, choosing the 4, 4, 3, 3, 3, 3 die brings similar results: a gain at 94 to 94 but a loss at 96 to 96. (The simulations used the coin-flip protocol, but the same kind of anomalies appear under other rules.)

It gets even more complicated. The results cited above are for players who always choose the same die, no matter what the circumstances. But Elgee’s rules allow a player to choose a different die on each roll. Presumably, players who adapt or learn will do better than any player with a fixed strategy. I find that a very simple adaptive strategy (use 6, 6, 5, 1, 1, 1 when behind, 4, 4, 3, 3, 3, 3 when ahead, and 6, 5, 4, 3, 2, 1 when the score is even) gives mostly, but not uniformly, good results in end-game situations.
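End-game positions like these can also be evaluated exactly rather than by simulation. The sketch below uses the coin-flip protocol: in each round a fair coin decides who rolls. Player A follows a strategy, which is any function from the current position to a die, and player B always rolls the standard die. Several details are assumptions, so the numbers need not match the simulations quoted above. Reaching or passing 100 is treated as a win. Each fixed strategy applies from the stated tie onward. The names `win_probability`, `fixed` and `adaptive` are our own.

```python
from functools import lru_cache

STANDARD = (6, 5, 4, 3, 2, 1)
RISKY = (6, 6, 5, 1, 1, 1)
SAFE = (4, 4, 3, 3, 3, 3)


def win_probability(strategy, a, b, opponent_die=STANDARD):
    """Chance that player A wins under the coin-flip protocol.

    a, b: points A and B still need (6 each when tied at 94 in a 100-point game).
    strategy(a, b) returns the die A rolls in that position; B always rolls
    opponent_die. Reaching or passing the target is assumed to win.
    """

    @lru_cache(maxsize=None)
    def v(a, b):
        die = strategy(a, b)
        # A wins the toss and rolls: finishing wins, otherwise play continues.
        a_rolls = sum(1.0 if f >= a else v(a - f, b) for f in die) / len(die)
        # B wins the toss and rolls: B finishing means A has lost.
        b_rolls = sum(0.0 if f >= b else v(a, b - f) for f in opponent_die) / len(opponent_die)
        return 0.5 * a_rolls + 0.5 * b_rolls

    return v(a, b)


def fixed(die):
    """Strategy that always rolls the same die."""
    return lambda a, b: die


def adaptive(a, b):
    """Risky die when behind, safe die when ahead, standard die when tied."""
    if a > b:  # A needs more points, so A is behind
        return RISKY
    if a < b:  # A needs fewer points, so A is ahead
        return SAFE
    return STANDARD


for tied_at in (94, 96):
    need = 100 - tied_at
    for label, strat in (("6,6,5,1,1,1", fixed(RISKY)),
                         ("4,4,3,3,3,3", fixed(SAFE)),
                         ("adaptive", adaptive)):
        edge = win_probability(strat, need, need) - 0.5
        print(f"tied at {tied_at}: {label:<12} edge {edge:+.3f}")
```

Because the strategy is an ordinary function, other rules (for example, switching only when trailing by more than a few points) can be compared by passing a different function.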

I echo Elgee’s questions about whether this problem has been analyzed previously. As it happens, I’ve been able to find a bit of literature on the original hockey issue. Tom Benjamin’s NHL Weblog has pointers to three papers published in the 1980s in Interfaces, an operations research journal. In those papers several authors—fans of rival teams—derive a formula for the optimal time to pull the goalie. (They find that coaches almost always wait too long.)

I would have thought that Elgee’s nonstandard dice would also have been investigated before. Some years ago Bradley Efron invented a wonderful set of nontransitive dice, which Martin Gardner wrote about (Scientific American, December 1970, pp. 110–114). Gardner also had a column on various kinds of rigged and trick dice (Scientific American, November 1968, pp. 140–146). A game called Piggy has certain elements reminiscent of Elgee’s game, but those elements don’t include dice that differ in variance.

Perhaps I’ve just failed to find the right search term on Google or MathSciNet, and a reader will supply a reference.

Update: Minutes after posting the above, I discovered an article by B. De Schuymer, H. De Meyer and B. De Baets, “Optimal strategies for equal-sum dice games” (Discrete Applied Mathematics 154 (2006) 2565–2576), that covers some of the territory. They analyze a game in which players can choose any die whose n faces have the same sum. But they consider only games in which players bet independently on each throw of the dice. Under these conditions, they show that with six-sided dice summing to 21, the optimal die is the standard one, with faces marked 6, 5, 4, 3, 2, 1. Again, however, if I understand correctly, this result applies only to individual throws of the die.
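For a feel of what a single-throw contest looks like, the sketch below assumes that each throw is won by whichever die shows the higher number, with ties counting for neither player. This is our reading for illustration only; the paper's exact formulation may differ. The helper name `single_throw_edge` is ours.

```python
from fractions import Fraction
from itertools import product


def single_throw_edge(die_x, die_y):
    """P(X > Y) - P(Y > X) for one throw of two fair dice; ties count for neither."""
    wins = losses = 0
    for x, y in product(die_x, die_y):
        if x > y:
            wins += 1
        elif y > x:
            losses += 1
    return Fraction(wins - losses, len(die_x) * len(die_y))


standard = (6, 5, 4, 3, 2, 1)
for other in [(6, 6, 6, 1, 1, 1), (4, 4, 4, 3, 3, 3), (6, 6, 5, 1, 1, 1)]:
    print(other, single_throw_edge(standard, other))
```

Under these assumptions, the standard die exactly breaks even on a single throw against 6, 6, 6, 1, 1, 1 and against 4, 4, 4, 3, 3, 3, and it beats Elgee's sum-20 die 6, 6, 5, 1, 1, 1 by 1/18. None of these single-throw margins says anything directly about the race to 100.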

Publication history

First publication: 29 November 2007

Converted to Eleventy framework: 22 April 2025

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Sounds like something that might have been studied by economists or financial analysts as a simple model of risk. A typical economic scenario is the comparison of, say, two mutual funds: one with higher risk and higher average payoff, and one with lower risk but lower average payoff.

As in hockey, it’s not uncommon for people to invest riskily when they’re young and have little to lose, and invest more safely once they’ve retired and have little means of getting their money back if they lose it gambling on stocks (a bit like the winning team in hockey).

And at the opposite pole from retirement savings we have the desperate-gambler problem: you enter a casino with $1,000, and you have to parlay it into $10,000 or your bookie will have you rubbed out. It’s well known that when the casino’s games are unfavorable to the gambler, the optimal strategy is bold play: on each bet, stake everything you have, or just enough to reach the goal if that is less.
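A quick numerical check compares bold play with timid play in the desperate-gambler setting. The sketch assumes even-money bets won with probability 18/38, as with a bet on red at an American roulette wheel. It counts money in units of $1,000, so the gambler starts at 1 and the goal is 10. It takes timid play to mean betting one unit at a time. The function names are ours.

```python
def bold_play_success(p, start, goal, sweeps=1000):
    """Chance of reaching `goal` from `start` (integer units) when every bet is
    min(fortune, goal - fortune) on an even-money wager won with probability p.
    Solved by repeated in-place sweeps over the absorbing Markov chain."""
    q = [0.0] * (goal + 1)
    q[goal] = 1.0  # fortune 0 stays at 0.0 (ruin)
    for _ in range(sweeps):
        for x in range(1, goal):
            stake = min(x, goal - x)
            q[x] = p * q[x + stake] + (1 - p) * q[x - stake]
    return q[start]


def timid_play_success(p, start, goal):
    """Gambler's-ruin probability when betting one unit at a time (requires p != 1/2)."""
    r = (1 - p) / p
    return (1 - r ** start) / (1 - r ** goal)


p = 18 / 38  # assumption: even-money bet on red at an American roulette wheel
print(f"bold:  {bold_play_success(p, 1, 10):.4f}")
print(f"timid: {timid_play_success(p, 1, 10):.4f}")
```

Starting from one unit, bold play only ever visits fortunes of 1, 2, 4, 6 and 8 units, so its success probability also has a closed form, p^4(1+q)/(1-p^2 q^2) with q = 1 - p.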

In relation to the game Piggy, Neller and Presser have worked on the simpler game of Pig to determine when you should continue rolling the die on your turn. Their results suggest that you should take more risks as the other player gets closer to winning. Todd W. Neller and Clifton G. M. Presser, “Optimal Play of the Dice Game Pig,” The UMAP Journal 25(1) (2004), pp. 25–47.

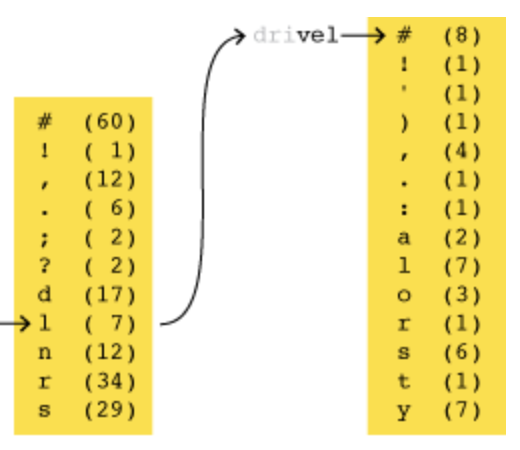

Elgee’s dice game might be better thought of in a take-away sense: Each player starts with a pile of N stones and, taking turns, removes a number of stones equal to the roll of a chosen die, the winner being the first player to empty his pile. In this way one can inductively calculate P(h,k), the probability of winning when you’ve got h stones left, your opponent has k stones, and it’s your turn to roll a die:

$$P(h,k) = \max\bigl\{1 - p_1 P(k,h-1) - p_2 P(k,h-2) - p_3 P(k,h-3) - p_4 P(k,h-4) - p_5 P(k,h-5) - p_6 P(k,h-6)\bigr\}$$

where the max is taken over the available choices of (p1,p2,p3,p4,p5,p6), representing the probabilities of rolling 1, 2, 3, 4, 5, or 6 with a given die (so that p1+p2+p3+p4+p5+p6=1). A term P(k,j) with j ≤ 0 is 0, because by then you have already emptied your pile and won. Note that this is a kind of min-max probability, which assumes your opponent adopts the same P-maximizing strategy: If he doesn’t, your probability of success can only go up.

It shouldn’t be terribly time-consuming to compute the 10,000 P(h,k)’s to reach P(100,100). Obviously one should also keep track of D(h,k), which tells you which die gives the max P from position (h,k).
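The recurrence translates almost line for line into a memoized function. In this minimal sketch, each die is a tuple of six equally likely faces, so repeated faces encode p1 through p6. Positions where the pile is already empty contribute 0, as in the recurrence. The name `solve`, the tuple `ELGEE_DICE`, and the rule that ties go to the first die listed are our own choices.

```python
from functools import lru_cache

# Elgee's three dice; repeated faces encode the probabilities p1..p6.
ELGEE_DICE = (
    (6, 5, 4, 3, 2, 1),
    (6, 6, 5, 1, 1, 1),
    (4, 4, 3, 3, 3, 3),
)


def solve(dice=ELGEE_DICE):
    """Return (P, D) for the take-away form of the game.

    P(h, k): win probability for the player to move, holding h stones against
    an opponent holding k, with both sides playing optimally.
    D(h, k): index into `dice` of a die attaining that maximum
    (the first die listed wins ties).
    """

    @lru_cache(maxsize=None)
    def best(h, k):
        best_p, best_i = -1.0, -1
        for i, die in enumerate(dice):
            p = 1.0
            for face in die:
                if face < h:
                    # Pile not yet empty: subtract the opponent's chance P(k, h - face).
                    p -= best(k, h - face)[0] / len(die)
                # Otherwise the pile is empty, and P(k, j) = 0 for j <= 0.
            if p > best_p:
                best_p, best_i = p, i
        return best_p, best_i

    def P(h, k):
        return best(h, k)[0]

    def D(h, k):
        return best(h, k)[1]

    return P, D


P, D = solve()
print(f"P(100, 100) = {P(100, 100):.4f}, best die: {ELGEE_DICE[D(100, 100)]}")
print(f"needing 6 against an opponent who needs 1: {ELGEE_DICE[D(6, 1)]}")  # (6, 6, 5, 1, 1, 1)
```

Every recursive call lowers the total number of stones, so the recursion is at most about 200 levels deep for N = 100. Filling all 10,000 positions takes well under a second.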

Barry is right. Dynamic programming is your friend on small problem instances like this.

Two suggestions for altering the model:

1) Players roll simultaneously after secretly choosing a die.

2) The game ends after a predetermined number of rolls.