Wrong Number

by Brian Hayes

Published 25 February 2009

Is it just me, or does it happen to everyone? When I try to correct someone else’s arithmetic, I can count on making an error of my own. For instance: I was reviewing William Goldbloom Bloch’s clever and provocative book The Unimaginable Mathematics of Borges’ Library of Babel. I noted in passing a numerical error, trivial in significance even though it’s enormous in magnitude. Bloch had occasion to introduce the number:

![]()

To give a sense of this number’s size, he mentioned that it has 33 million digits. I pointed out that the true digit count is exponentially larger: 1033,013,740. Now I’ve received a correction to my correction, in a note from Michael Rosenthal:

The author [of the book] stated 33 million digits as the length, but Hayes getting closer states that the length is (10 to the 33,013,730). Isn’t the actual number of digits ((10 to the 33,013,730) + 1)?

Of course Rosenthal is right. There are 10n n-digit decimal numbers, but 10n is not one of them. On the other hand, Rosenthal also gives a double-barrelled demonstration of my point about the perils of correcting other people’s arithmetic: In the course of setting me straight, he makes a typographical slip of his own, twice mistranscribing 33,013,740.

My correspondence with Rosenthal provoked me to take a closer look at where that enormous number came from in the first place. This has led me into some diverting adventures with factorials and logarithms. But it has also put me in the position of proposing another correction to a published number, which means I am in grave danger of making still more mistakes.

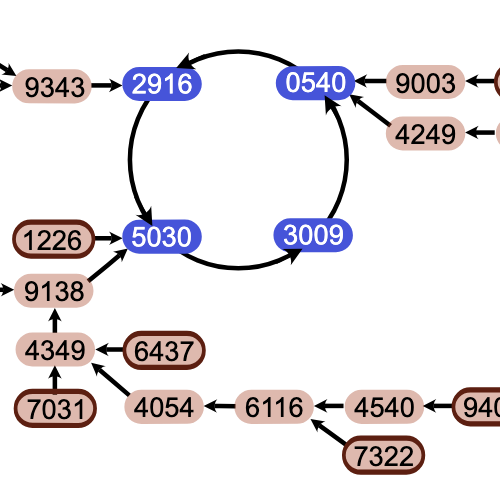

Bloch’s book is a mathematical companion to “The Library of Babel,” a brief ficción by the Argentinian fabulist Jorge Luis Borges. In the Library of Babel all books are written in an alphabet of just 25 symbols. Each book has 410 pages, and each page has 40 lines of 80 characters, for a total of 1,312,000 characters per volume. We are told that the library possesses one copy of every possible volume composed in this way. Thus the number of volumes on the library’s shelves is 251,312,000. For convenience of reference let us call this number B (for Book, or Babel, or Borges, or Bloch).

The much larger number mentioned in my review enters Bloch’s story when he asks how many ways we might arrange the books on the library shelves. The answer, of course, is the factorial of B. If we imagine that the shelves have discrete slots that hold one book each, then we have B choices for the first slot, B–1 choices for the second slot, B–2 for the third, and so on, until we come to the final slot where there’s just one book left to choose. The product of all these numbers is B!.

So what sort of a number is B!? Can we have a look at it? In this age of computational wonders, surely we can just fire up Sage or Mathematica, or write a four-liner in Lisp, and have the digits of that number served to us on a silver platter, no?

No. We can’t.

After three decades of playing with computers, I am still childishly agog at the ease with which I can tap a few keys and calculate a gargantuan quantity such as 52!, the number of permutations of a standard deck of playing cards:

8065817517094387857166063685640376 6975289505440883277824000000000000

I can even calculate 10,000!, unleashing a Niagara of digits that spill out onto my screen in a long gray torrent—some 35,660 of them by the time the flood abates. (Digression: How many of those digits are trailing zeros? This is a question Fred Gruenberger would always ask when he talked about factorials in his old Popular Computing newsletter, back in the days when computing that many digits took a bit of doing.)

Computing has come a long way, but even with so much numerical horsepower at our fingertips, the direct calculation of B! is still totally unthinkable. If Bloch had been right about the 33 million digits, the computation might be feasible, if tedious, but computing a number with 1033,013,740 digits is just ridiculous, with or without the extra digit I forgot.

If we can’t compute B! outright, maybe we can at least get its logarithm. For a rough estimate of the magnitude—in other words, for counting the digits—the realm of logarithms is the right place to be. Since the factorial of n is a product,

![]()

the logarithm of the factorial has to be a sum:

![]()

I don’t know a quick and easy way to perform this summation, but I do know how to evaluate a slightly different sum, one where every term on the right hand side of the equation is log n. Since there are n of these terms, the sum is obviously just n log n. What we have calculated in this way is the logarithm of nn. Thus nn can be taken as a crude approximation—a very sloppy upper bound—to the value of n!. For our particular case we have log BB = B log B. When I plug in the numerical value of B, and use base-10 logarithms, I come up with

![]()

Exponentiating both sides gives this answer:

![]()

Hmm. There’s a problem here. This is supposed to be a loose upper bound, but it’s smaller than the value we were expecting for B!. Clearly, BB can’t be less than B!.

Bloch reports that he came to his estimate of B! via Stirling’s approximation. If you look up this bit of mathematical technology, you’ll find a formula something like this:

![]()

It would be easy to translate the formula directly into computer code, but it’s pointless to do so if the aim is to calculate B!. Again the only practical way to proceed is to take logarithms of both sides. Then the product in the formula becomes a sum of three terms. The first term, log nn, we already know: It’s n log n. The second term, log e–n, is simply –n if we choose to work with natural logarithms; if we want to stick with base-10 logs, it’s –n/log 10. And the third term we don’t need to worry about; at the level of precision we can hope to achieve in these calculations, ½ log 2πn is too small to notice. So, keeping just the first two terms, we have a rough-and-ready approximation to Stirling’s approximation, which ought to work well enough for very large n. B certainly seems to qualify as a large n; when I plug in its value, here’s what I get:

![]()

I have plodded my way slowly and methodically through the steps of this computation in an effort to persuade myself that I might finally have it right. At this point I’m fairly sure that log B! is closer to 101,834,103 than it is to 1033,013,740, but I’m chary of making a stronger claim. With all my crossing back and forth between the safe world of logarithms and the uninhabitable wasteland of exponentials, I worry that I’ve misplaced a factor of e or, worse, mistaken the logarithm of a number for the number itself. If I’ve goofed again somewhere in this calculation, I trust that readers will let me know.

I want to emphasize that my aim in telling this story is certainly not to embarrass Bloch, whose work I admire. His book does a splendid job of explaining ideas in combinatorics, number theory and even topology, in a literary context where such themes don’t get much exposure. His little numerical error—if it is an error—has no impact on the meaning of the passage where it appears.

Indeed, it’s hard to imagine a circumstance where the difference between the two proposed values of B! could have any earthly consequence. Both numbers are always going to be ghostly presences in our universe—actors whose voice we hear from offstage but who never show their face under the lights. A certain breed of radical constructivist would argue that the numbers we’re talking about don’t exist at all. I don’t find that doctrine helpful or persuasive, but I do think there’s a distinction worth making between numbers we can actually hold in our hands and these strange, outsized objects that come equipped with their own exclamation points. Computing with numbers this large is like working with toxic or radioactive materials. You never get to touch anything directly; all manipulations are performed by remote control from behind a blast shield. The experience can be exciting, but afterwards you don’t come away with a sense that you really know what you’re doing.

Fred Gruenberger would have wanted to know how many trailing zeros there are in B!. Can we answer?

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 25 February 2009

Converted to Eleventy framework: 22 April 2025

Fred Gruenberger would have wanted to know how many trailing zeros there are in B!. Can we answer?

Yes.

The number of trailing zeroes in n! is $\lfloor n/5 \rfloor + \lfloor n/5^2 \rfloor + \lfloor n/5^3 \rfloor + \ldots$.

I’m not sure how to calculate this for an arbitrary very large n. But fortunately, B is a power of 5, so each of the terms in the above series is also a power of 5, and you can use the formula for the sum of a geometric series to see that the number of trailing zeroes is (B-1)/(5-1).

This takes only a few seconds to compute; just for fun, I’ve posted the resulting 1,834,097-digit number at http://sage.math.washington.edu/home/cwitty/num_trailing_zeroes_in_B_factorial.txt (I used Sage to do the computation).

Hi.

That’s a nice puzzle indeed, thanks.

Well, skipping a bit of messing with sums, the number of trailing zeros should be:

$$

Z = \sum_{m=1}^{m=\infty} \lfloor \frac {N} {10^m} \rfloor

$$

where $N$ is the number in question ($25^1312000$), and sum is actually a finite one with $\lfloor \log_{10} {N} \rfloor$ non-zero elements.

After that, we can notice that $Z$ is more than $N/10$ and less than $N/9$, and go no further, or try to compute $Z$ explicitly (in some sense of the word).

I wrote a Python script for this (well, Python has a nice long integer arithmetics). It goes like this:

Z = 0

N = 25**1312000

m = 1

N /= 5

while True:

____a = N >> m

____if a == 0:

________break

____Z += a

____m += 1

____N /= 5

Here I take advantage of the fact that $N$ is a power of $5$, therefore all divisions by $5$ are precise.

The script is running. I am quite optimistic about it — I do still believe it’s going to finish in a couple of days or so.

Another problem would be to extract some information about $Z$ later, like exact number of digits or first several most significant bits.

Also:

I wrote this Sage one-liner to compute the number of digits in n!:

sage: def n_digits_in_factorial(k): return (RR(k+1).lngamma() / log(10.0)).ceiling()

I checked that this gives the correct answer for several test cases, ranging from 2 to 100000; so I believe it is correct (although for large n, only the first digits of the result will be correct).

Then I get this result:

sage: RR(n_digits_in_factorial(25^1312000))

3.58756666520195e1834103

which agrees with your computation, so I think your result is correct.

OK, I couldn’t stop thinking about this problem.

I wrote this script: http://sage.math.washington.edu/home/cwitty/factorial_digits.sage

which uses interval arithmetic (based on the MPFI and MPFR libraries) and the lngamma function to compute both the number of digits in the factorial result and some leading digits of the actual factorial. Again, I tested with smaller values, and the results seemed correct. (Sorry the script is undocumented. The first argument to the function is the number of bits to use; the second argument is the number you’re taking the factorial of. If the given precision is insufficient, the function returns None; if it has enough precision, it returns a pair of the number of digits and of the leading digits, scaled to lie between 0.1 and 1.)

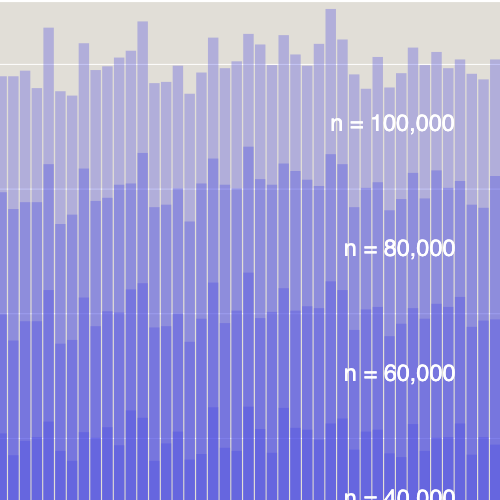

Computing with 1,000,000-bit floating-point numbers was not enough; but using 10-million bit numbers, and a little over 6 minutes of CPU time, I got both the exact number of digits in B! and the first 1,176,194 digits of B!. The number of digits in B! is at http://sage.math.washington.edu/home/cwitty/num_digits_in_B_factorial.txt (this is a 1,834,104-digit number); the first 1,176,195 digits of B! are at http://sage.math.washington.edu/home/cwitty/leading_digits_of_B_factorial.txt (but the question mark after the final 2 means that it might actually be a 1, so I’m not counting that digit as “known”).

Dealing with numbers this large is confusing. When I noticed that the number of digits in the number of trailing zeroes of B! was 1,834,097, and the number of digits in the number of digits of B! was 1,834,104, my first thought was “OK, so almost all the digits are trailing zeroes”. But of course that’s totally wrong… what this actually shows is that almost none of the digits are trailing zeroes.

Talk about wonders of the computer age! All you have to do is post your questions at night, and when you come back early in the morning, all the answers are there waiting for you, like flax spun into gold.

Thank you Carl Witty and Alexander.

Carl, I have to ask: Did you get those results running Sage on your cell phone?

Carl Witty wrote:

Yeah, I agree wholeheartedly. I had the same feeling when I first looked at the difference between approximations to log B^B and log B!. In floating-point notation these numbers have the same exponent and no change in the first six digits of the significand. How can subtracting 25^1,312,000 have so little effect? It took some pondering to accept that 0.000001 x 10^1,184,103 is not a small difference!

Skitt’s law, math version?

btw, ur CAPTCHA==<3

Carl, I have to ask: Did you get those results running Sage on your cell phone?

No; turns out Sage on my cell phone is too slow to be interesting. (The biggest problem is that it doesn’t have enough RAM, so almost everything uses swap space.)

I actually did the big computation on sage.math.washington.edu (24 cores, 128 GB RAM), which was purchased by William Stein using National Science Foundation Grant No. DMS-0821725. But in retrospect, I’m pretty sure I could have run it on my laptop and still finished in reasonable time. (Not my cell phone, though.)

But surely what is more spooky is that almost all natural numbers are larger than the numbers that you describe. And arbitrarily much larger as well. Then, just when you think that you have come to terms with that, and think that you have got a handle on the naturals, you realize that they are just peanuts to the reals…

I decided to try to compute some more digits of B!. At this point, computing leading digits is “easy” if you have enough computing power, so I decided to go back to the trailing digits. It’s totally trivial to compute trillions of trailing digits, but the’re all ’0′, so that’s kind of boring; so I decided to look at the trailing digits just before the zeroes.

Using the Sage script at http://sage.math.washington.edu/home/cwitty/factorial_trailing.py, I have computed that the 11 digits just before the trailing zeroes start are: 01630550016 (and this time the script has comments). It took a little under 3 minutes to check this, with the call decimal_factorial_nz_trailing(2*1312000, 11); each additional digit would multiply the run time by about 5. (The times could be improved a lot by writing in Cython instead of Python, or by managing to prove a simple number theory fact; but the algorithm would still be exponential in the number of digits, so you’d only get a few more digits.)

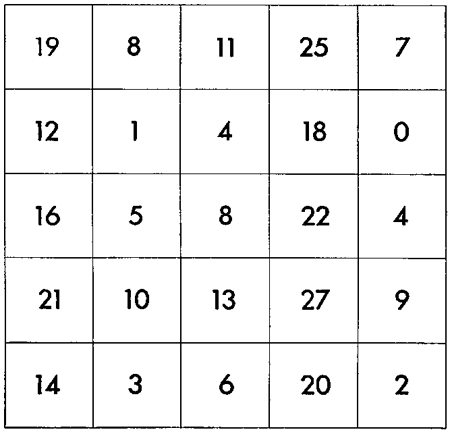

While testing this on small examples, I noticed an interesting pattern; this makes it seem plausible that a much better algorithm might be found that could give a lot more digits. The pattern is that factorial(5^k) shares some trailing non-zero digits with factorial(5^(k+4)).

Here are the last 40 trailing non-zero digits of factorial(5^k) for 1<=k<=11, grouped by k mod 4 (trailing “…” are all the omitted zero digits).

Note that 9 of the 11 trailing non-zero digits of B! occur already in (5^8)!.

If the <pre> tag were supported in comments here, then all the digits would line up nicely above; hopefully it’s still easy to see the common digits anyway.

@Carl Witty:

<pre> does work; I’ve inserted the tags.

<pre> may work for you, but it doesn’t work for me. I already included <pre> / </pre> tags in my comment; if you didn’t see them when you edited the comment, then they must get stripped out before the comment is saved. (Thanks for adding the <pre> tag for me, though!)

I hadn’t realized how privileged I am.

At this point I don’t know where the <pre> tag is being stripped out. More important, I don’t know why. Offhand, I can’t see any particular security risk in letting folks post monospaced content, but what do I know.

My 12-year-old was given as homework: How many trailing 0′s are there in 1005! (See also my blog entry - http://joeganley.com/2008/09/1005.html - in which we derived the same formula as in Carl Witty’s comment above.)