The End of the Number Line

by Brian Hayes

Published 5 February 2008

Very likely you already know how to count, but let’s review anyway. The usual counting sequence for the natural numbers starts 1, 2, 3, 4, 5, … and goes on for quite some time. Some people prefer to start 0, 1, 2, 3, 4, 5, …, but they wind up in the same place at the end. (In general I side with the nullists who count from nothing, but today I find it convenient to join the unitarians: In what follows the least natural number is 1.)

Here’s a computer program for counting out the numbers in their standard sequence:

n = 1 do forever print n n = n + 1

The last line of this program defines a successor function: For every possible value of n, it names the next n. Since the program starts with n=1 and iterates the successor function forever, every natural number appears exactly once in the output.

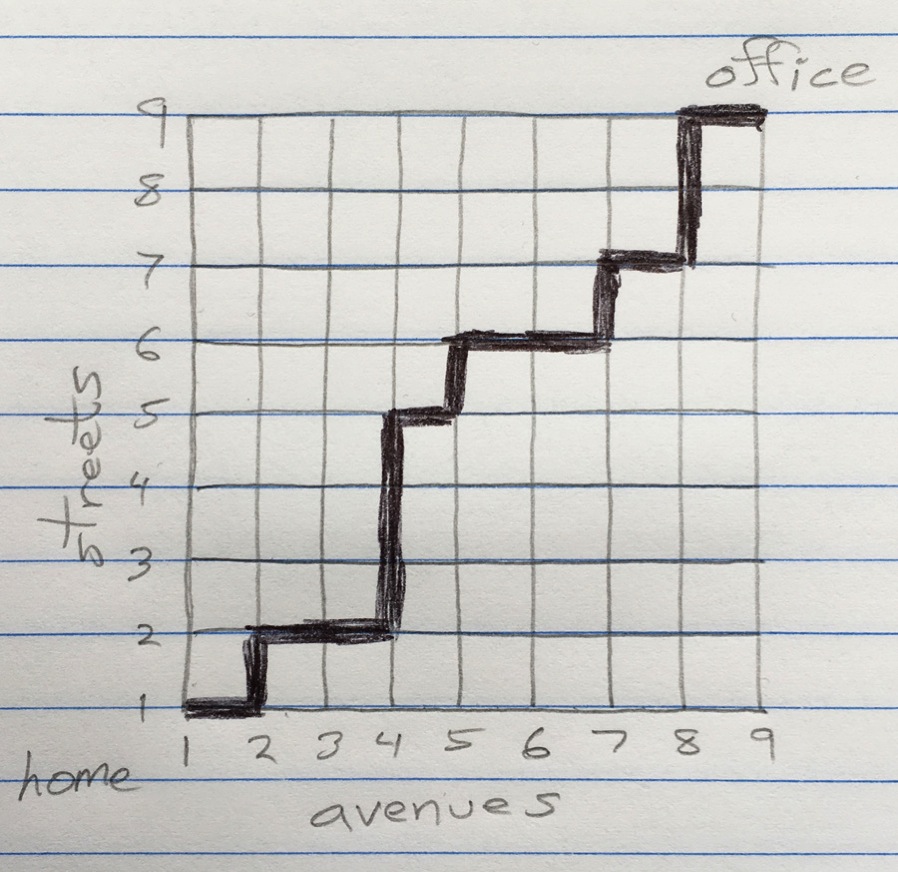

What if we want to arrange the natural numbers in some other order, perhaps starting with a value other than 1? For example, we might want to count 2, 1, 4, 3, 6, 5, 8, 7, …. Altering the program to generate this sequence requires only minor adjustments:

n = 2

do forever

print n

if even(n)

then n = n – 1

else n = n + 3

How about listing all the odd numbers first, followed by all the even numbers: 1, 3, 5, …, 2, 4, 6, …? That seems to be a valid permutation of the natural numbers, but there’s something strange and troubling about the idea of counting this way. When we begin ticking off 1, 3, 5, …, it’s not at all clear how we’re ever going to finish the odd numbers so that we can get started on the even ones. I don’t know how to write a program to generate this complete series. On the other hand, the successor function for such a program is easy enough to describe: It’s just n = n + 2. (Do you doubt this statement? Well, I admit it makes me queasy too. But show me a value of n for which the function fails to produce the correct successor.)

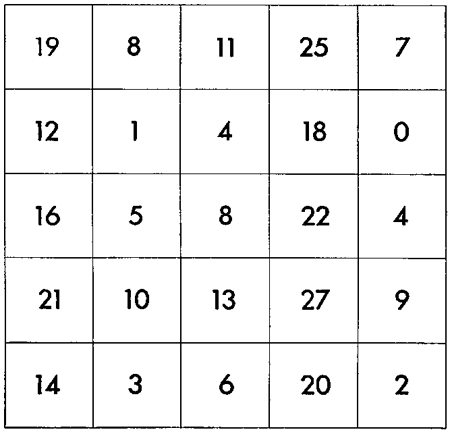

Here’s another ordering of the natural numbers, which looks even weirder:

3, 5, 7, 9, …, 6, 10, 14, 18, …, 12, 20, 28, 36, …, …, 8, 4, 2, 1

There’s a pattern in this series, which emerges when you poke at it a little. For the moment, ignore the final “…, 8, 4, 2, 1″ segment. We start out with the number 3 and then list all the odd numbers greater than 3 in order of increasing magnitude; when this first part of the sequence is finished, we take each of its elements in turn and double it, concatenating the results to form the next segment of the sequence; then in the third phase we take the same odd elements again and multiply each of them by four; the process continues in the obvious way. Thus the successive segments have the following structure:

3×20, 5×20, 7×20, 9×20, …

3×21, 5×21, 7×21, 9×21, …

3×22, 5×22, 7×22, 9×22, …

3×23, 5×23, 7×23, 9×23, …

The only natural numbers missing from this infinite sequence of infinite sequences are the powers of 2 (including 20=1). Those missing values make up the final segment of the sequence. The really weird part is that the powers of 2 are listed in reverse order, from largest to smallest: …, 24, 23, 22, 21, 20. Because of this inversion, we have an inside-out sequence, solidly anchored at both ends but all puffed up with infinities throughout the middle.

What inspires me to write about this curious counting sequence is an article in the latest issue of The American Mathematical Monthly, which landed in my mailbox over the weekend. The article, titled “On ordering the natural numbers, or the Sharkovski theorem,” is by Krzysztof Ciesielski and Zdzisław Pogoda of the Jagiellonian University in Krakow. They explain that the sequence was invented (discovered?) in 1964 by the Ukrainian mathematician Alexandr Nicolaevich Sharkovski. It arose in the context of dynamical systems and chaos theory. I’m not going to get into those topics, but I highly recommend reading Ciesielski and Pogoda’s article. What I want to talk about here is the process of counting in this strange order.

I certainly can’t exhibit a complete counting program for the Sharkovski sequence—a program that would begin with 3 and end with 1 and in between visit all the other natural numbers. After some thought and a bit of trial and error, however, I realized that I can write a successor function for the sequence. It’s a surprisingly sweet little function, concise and simple, which suggests that maybe the sequence is not quite as weird as it looks.

My first instinct in the search for a successor function was to do a case analysis, in effect supplying a separate function for each segment of the series. Each of the segments (except the last one, which I shall again ignore for the nonce) consists of numbers in a certain congruence class: 3, 5, 7, 9 are all of the form 2x+1 (where x ranges over the natural numbers); 6, 10, 14, 18 have the form 4x+2; 12, 20, 28, 36 follow the pattern 8x+4, and so forth. Thus the successor function would be a long series of clauses, one for each possible congruence class. The problem, of course, is that I’d need infinitely many clauses.

There’s a better way. For the first segment of the series—the part made up of odd numbers 3 or greater—the successor function is simple and obvious: n = n + 2. All the numbers of the second segment (3×2, 5×2, 7×2, 9×2) can be reduced to the corresponding odd numbers of the first segment; we just need to divide each of them by 2. For any number in the second segment we can find the correct successor by applying a series of three operations: divide by 2, add 2, multiply by 2. The formula is 2 × (2 + (n/2)). The later segments can be treated similarly, repeatedly dividing n by 2 until we reach an odd number, then adding 2, and finally multiplying by 2 as many times as we divided. This suggests a recursive formulation of the successor function:

function s(n)

if odd(n)

then return n + 2

else return 2 * s(n/2)

That’s all there is to it, and it actually works most of the time! Specifically, it works for all values of n except powers of 2. Consider what happens for n=20. The predicate odd(20) fails, and so we take the else branch of the if statement. There we evaluate the expression 2 * s(20/2), which leads to a recursive invocation of s(10). Again the predicate odd(10) fails, and so we evaluate 2 * s(10/2). This time the recursive call is s(5); the predicate odd(5) succeeds, and so the then clause is selected, returning a value of 5+2=7. Now the two pending multiplications are completed, producing a final value of 2×2×7=28, which is indeed the correct successor of 20. Nothing to it!

I was surprised that so much of the structure of the Sharkovski sequence could be captured in just a few lines of code. But we still have to deal with the final segment of the series, the descending powers of 2—…, 24, 23, 22, 21, 20. Can it be done without making too much of a mess?

Suppose we had on hand a predicate function twopower(n), which returns a value of true if n has no prime factors other than 2, and returns false otherwise. Then we could add an extra if clause to the successor function:

function s(n)

if twopower(n)

then return n/2

elseif odd(n)

then return n + 2

else return 2 * s(n/2)

This function now gives the correct Sharkovski successor for every natural number with one exception. It works as before for odd numbers and for even numbers that are not powers of 2; and, given a power of 2, it returns the next lower power of 2. For example, if the argument n is equal to 4, s(n) returns a result of 2; for n=2, s(n) = 1. The remaining problem case is n=1. I’ll return to that issue below; in the meantime, let’s see how the auxiliary function twopower(n) might be implemented. Here’s how I would do it:

function twopower(n)

if n==1

then return true

elseif odd(n)

then return false

else return twopower(n/2)

The logic here begins with the easy cases: If n is equal to 1 then it definitely is a power of 2, and if n is an odd number other than 1 then it definitely is not a power of 2. If neither of these descriptions apply, then n must be an even number. So we divide it by 2 and start over. The rules of the recursion ensure that any power of 2 will eventually be reduced to 1 (so that the function returns true) and any other natural number will eventually expose an odd factor other than 1 (so that the function returns false).

There’s something curious about this procedure. In its structure and its action the twopower predicate is remarkably similar to the successor function itself. Both procedures involve a recursion on n/2, which terminates only when all the factors of 2 have been divided out. Indeed, every time a successor is calculated, this sequence of divisions is performed twice, first in the twopower predicate and then in the main body of s(n). Surely we ought to be able to avoid this wasteful duplication. Can’t we just do a test at the bottom of the main recursion to find out whether or not we’re dealing with a power of 2?

Yes, we can. And the change to the s(n) program is tiny. We just replace the twopower(n) predicate with the predicate n==1.

function s(n)

if n==1

then return n/2

elseif odd(n)

then return n + 2

else return 2 * s(n/2)

For odd numbers greater than or equal to 3, and for even numbers that are not powers of 2, this procedure again works exactly like the earlier versions. To see how powers of 2 are handled, we can trace the execution of s(4). Neither the n==1 nor the odd(n) predicates are satisfied, and so we fall through to the last line, generating a recursive call s(2). Again the value of n is neither equal to 1 nor odd, so the last line of the definition is activated once more, generating the procedure call s(1). Now the n==1 test is true, and so the value is n/2, or 1/2. Climbing back up through the chain of pending multiplications produces the final value s(4) = 2 * 2 * 1/2 = 2. We get the right answer, and we’ve eliminated the duplicated recursion when testing for powers of two.

Very nice. But there’s still that pesky exception: What happens if you ask the Sharkovski successor function for the successor of 1?

Well, what’s supposed to happen? As the series is presented to us in the Ciesielski and Pogoda article, 1 has no successor. This makes sense in the context where the sequence was originally defined, but if you look at the sequence merely as a counting order, that abrupt end point is very strange. When Giuseppe Peano set out to define the natural numbers, one of the axioms was that every natural number has a successor.

I can think of four ways of dealing with this situation.

Option 1: Ignore it and it will go away. If we start counting through the Sharkovski sequence at n=3, we’re never going to reach n=1 anyway, so why worry about it? But by this argument we could just use n = n + 2 as the successor function, which takes all the fun out of the game.

Option 2: Abide by the rule that 1 has no successor. If the program is asked to provide such a nonexistent result, it halts and prints an error message: “Sorry, but you have fallen off the end of the number line.” This behavior may be the right choice, but I resist it strenuously, on aesthetic grounds. The problem is that the value n=1 can be generated internally, during the recursive processing of a higher power of 2, or it can be presented from the outside, as the initial argument to s(n). These two cases have to be treated differently. It’s entirely possible to do that, but the resulting program is ugly and artificial.

Option 3: Accept what the program tells us, and run with it. In other words, don’t adapt the code to the mathematics; bend the math to suit the code. According to the last version of s(n) given above, what is the next Sharkovski number after 1? Why it’s 1/2 of course! I’ll concede that 1/2 is a fairly strange-looking natural number, but maybe the idea is not totally indefensible. We got to this point by counting down …, 24, 23, 22, 21, 20. It’s not so bizarre to suggest continuing with 2–1. Indeed, we could take a step further. Changing the expression n==1 to n<=1 will ensure that 1/2 also has a successor, namely 1/4, which is followed by 1/8, etc. Now the sequence is infinite at the top, which makes me feel less claustrophobic. (Note that this decision does not change the domain of the function to cover all the rationals. We still can’t calculate the successor of 1/3 or 3/2. We’ve extended the definition of a natural number rather modestly. Previously, natural numbers of the form 2k required k to be a nonnegative integer; now it can be any integer.)

Option 4: Let the successor of 1 be 3. This choice has the effect of turning the Sharkovski sequence into a giant cycle (with a lot of infinities in the middle) and acknowledges the unique role of 1 as the only odd number that’s a power of 2. The code is not very different from what we’ve already seen:

function s(n)

if odd(n)

then return n + 2

elseif (n==2)

then return 1

else return 2 * s(n/2)

In this version we don’t need a special case to deal with n=1; it is treated the same as all the other odd numbers. But now we do need special handling for n=2. In this respect the two solutions seem roughly equal by standard measures of kludginess. The cyclic version might deserve extra credit because it never dabbles in nonintegral values of n.

I have a closing thought. What happens if we try to simplify the successor function even further? Starting from either of the two final versions of s(n) given above, and eliminating the special cases, we wind up with this variant:

function s(n)

if odd(n)

then return n + 2

else return 2 * s(n/2)

But that’s exactly where we began! It’s our first approximation to a successor function, derived by ignoring the problem of those annoying descending powers of 2 at the end of the Sharkovski sequence. This version has an appealing symmetry and simplicity. Odd numbers are treated one way, even numbers another. No other distinctions are necessary.

What does this oversimplified function produce? It yields this:

1, 3, 5, 7, …, 2, 6, 10, 14, …, 4, 12, 20, 28, …, 8, 24, 40, 56, …

That’s rather pretty, and indeed seems more orderly—more “sequential”—than the Sharkovsky sequence itself. At the same time, it leads me to wonder whether we’re really dealing with a sequence at all, rather than a collection of disjoint sequences. What do the dots between those segments really mean? How do we know that the segments should be assembled in the given order? There’s nothing in the successor function to favor that arrangement over, say,

4, 12, 20, 28, …, 1, 3, 5, 7, …, 8, 24, 40, 56, …, 2, 6, 10, 14, ….

In the case of the Sharkovski sequence, there’s an extrinsic reason for imposing a total order, having to do with the origin of the sequence in the study of dynamical systems. The elements of the sequence correspond to the fundamental periods of periodic points in such systems. Sharkovski proved that if a dynamical system has a point with period n, then it also has at least one point with period m for every m that comes later than n in the Sharkovski sequence. (In particular, this means that a period-3 point must be accompanied by points of all other periods: deterministic chaos.)

Unfortunately, there’s no such justification if we look upon a sequence as just an arbitrary arrangement of numbers, ordered according to its own internal logic. I’m not sure such a logic exists in this case.

Notes: Neil J. A. Sloane, who is never out of sequence, already has a reference to Ciesielski and Pogoda, but the sequence is indexed under A005408, the odd numbers. Getting the full Sharkovski sequence into Sloane’s index would be as challenging as writing a program to generate it.

The article by Ciesielski and Pogoda is a translation (from the Polish, by Abe Shenitzer) of a chapter from their book, Mathematical Diamonds, published in Warsaw in 1997. I’m curious about the rest of the chapters. Perhaps some enterprising mathematical publisher will bring out the whole book in English.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 5 February 2008

Converted to Eleventy framework: 22 April 2025

To read this article, and note how nicely Brian interlaces the sequences with code, reminds me of Chaitin’s ideas of algorithmic complexity. When we see these various sequences, it is interesting and indeed, a method for classification, to ask: How can we code a sequence and with that coding, say something about the sequence?

I think Brian does a wonderful job of connecting these ideas (and I wish I had more time to expand my thoughts here) and I hope that in future posts, he’ll explore what he’s started here even more.

Sharkovski’s ordering makes the statement of the associated theorem in dynamics very simple. Other orderings may make the statement more complicated but still be acceptable.

It occurs to me it’s also of interest to find a good recursive definition for the “smaller than” function for the Sharkovski ordering — i.e., the function S(a,b) that returns a or b, depending on which one appears first. Here’s a not-so-succinct stab at doing so:

function S(a,b) if a==1 then return b elseif b=1 then return a elseif odd(ab) then return min(a,b) elseif odd(a) then return a elseif odd(b) then return b else return 2 * S(a/2,b/2)Note, the function min(a,b) has its own recursive definition when a and b are positive integers:

function min(a,b) if a==1 then return a elseif b==1 then return b else return 1+min(a-1,b-1)Does anybody know if there is a statement similar to Sharkovski’s theorem but for cellular automata?

I suppose the 4th approach derives from trying to find the element before 3. Tracing the program gives 1.

One difference between “2, 1, 4, 3, 6, 5, 8, 7, …” and “1, 3, 5, …, 2, 4, 6, …” is that “…” means “do forever”. So you need 2 “do forever” statements.

The Sharkovski Theorem is rather pretty because it is simply stated and deep. Stating it in terms of an ordering of N is an “artistic” touch.

The relationship between mathematics and art is often discussed in the sense of how one can use math to produce artistic visual or musical effects. I have been wondering about the internal aesthetics of math; of mathematical statements or proofs that are beautiful; math that is beautiful based on its mathematical content.

The three statements below that I have quoted from your article, indicate that awareness of this kind of beauty is part of your work (and the work of many good mathematicians).

“It’s a surprisingly sweet little function, concise and simple,…”

“It’s entirely possible to do that, but the resulting program is ugly and artificial.”

“That’s rather pretty,…”

As you can see, I am not just saying that the beauty in mathematics arises because mathematicians seek truth, and truth is beauty. But rather there are are some mathematical truths that are beautiful and others that are not. This is probably not a new idea. Can you refer me to writings on this subject? If not, you may want to write a column on it yourself.