Choosing Up Sides

by Brian Hayes

Published 20 February 2007

A while ago I wrote about the playground ritual of choosing teams for a ball game. The simplest algorithm has two captains, A and B, who take turns choosing players until everyone is assigned to one team or the other. Call this the ABAB algorithm. Donald B. Aulenbach suggested a very easy modification that produces more closely matched teams. Aulenbach’s proposal is the ABBA algorithm, where A gets the first pick in the first round but B goes first in the second round, and they continue alternating in successive rounds. (Another way of describing the same process is that A begins with a single turn and thereafter both captains take two turns in a row.)

For a quantitative model of this process, let’s suppose each player’s ability is represented by an integer chosen uniformly at random from the interval 1 through m. We’ll let the total number of players, n, always be even, so that the two teams will be of the same size. After we have divvied up all the players, we sum the strengths of the two teams and calculate the discrepancy—the absolute value of the difference. Stephan Mertens of the the Otto von Guericke Universität in Magdeburg (who put me onto this problem in the first place) has shown that the expected discrepancy for the ABAB algorithm is m/2 × (n–1)/n; thus as n becomes large the discrepancy approaches m/2.

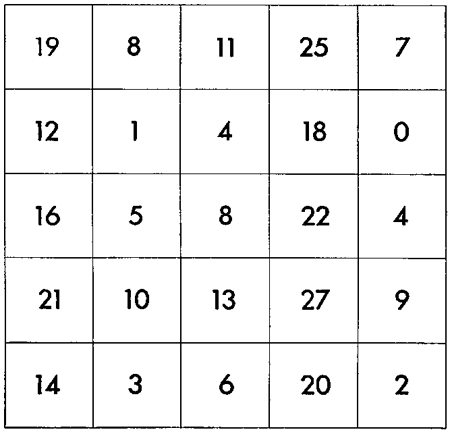

What about the ABBA algorithm? Here are some numerical results:

discrepancy n m ABAB ABBA exact 4 4 1.4 0.7 0.7 6 8 3.4 1.4 0.9 8 16 7.0 2.3 1.2 10 32 14.7 4.2 1.7 12 64 29.2 7.2 2.3 14 128 59.5 13.7 3.6 16 256 122.4 23.9 5.7 18 512 244.6 47.6 8.4 20 1024 490.6 90.6 14.2 22 2048 970.4 168.9 24 4096 1944.9 315.7 26 8192 3972.5 621.5 28 16384 7907.9 1177.9 30 32768 15892.6 2291.4 32 65536 31877.2 4520.0

For each line of this table I ran the ABAB and ABBA algorithms on 1,000 randomly generated sets of numbers with the indicated values of n and m, then averaged the results. Up to n=20 I also found the optimum partitioning of each set, by a brute-force enumeration. The values of m are chosen so that log2m = n/2. Most sets with these values of m and n have many perfect partitions, with discrepancy zero—but of course neither the ABAB nor the ABBA algorithm is guaranteed to find these ideal solutions.

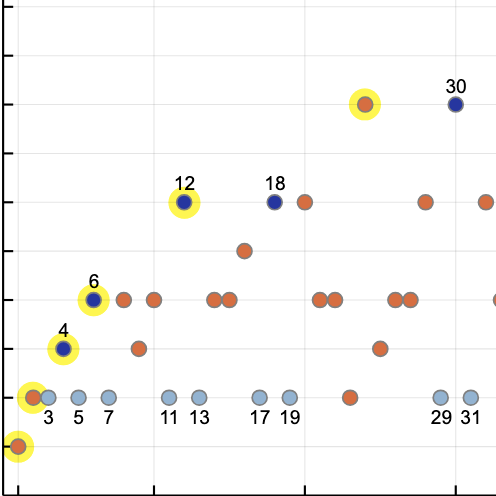

Here are the same data in graphic form:

Clearly, ABBA is superior to ABAB, just as one might expect.

Questions:

- What is the expected discrepancy of the ABBA algorithm, as a function of n and m?

- Is ABBA the optimal algorithm of its type? Of course it is not the best of all algorithms; brute-force enumeration is better, at the cost of exponential computing time. There are also superior heuristics, but they cannot be implemented with a fixed sequence of choices, determined in advance. ABBA is a “blind” algorithm: It applies the same sequence of operations to all sets of data. Is there any other blind algorithm that outperforms it?

- The uniform random distribution of abilities is probably not very realistic; a normal distribution would be more plausible. Would this make any difference in the performance of the algorithms?

- Surely someone has worked on this problem before?

Going back to my own childhood, I don’t think the kids in my neighborhood ever discovered the ABBA algorithm. We did recognize the inherent advantage of choosing first, and we compensated by adopting a separate ritual to decide who got the first pick. In baseball, this involved a hand-over-hand struggle for a grip on the bat. Sometimes I think the preliminaries were more fun than the game.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 20 February 2007

Converted to Eleventy framework: 22 April 2025

The ABBA method is basically a two player version of the “snake draft” customarily used to pick teams in fantasy football leagues. Team drafting order is selected either randomly or based on the previous season’s final standings. After the first round of picks, the last picker picks first in the second round, thus picking twice in a row, and the sequence is reversed. So in a four team league it would go ABCDDCBAABCD….

I’m not clear on the rules of the game here. are the player “qualities” hidden from the choosers, or does A always choose the top ranked player, B chooses the next two, and so on? If not, do A and B pick randomly from all players at each step ?

Suresh: The abilities are known to both captains, and furthermore the captains agree in all their assessments. I assume that the captains always choose the highest-ranked player from those remaining.

I wonder how the following algorithm, which I’ll call the ABA’B’ method, does: Captain A picks first, followed by Captain B. They obviously pick the top two players. Then Captain B makes A’s next choice — picking the *worst* player available — followed by A doing the same for B’s team. Then repeat. In effect this pairs players from the outside in (assuming the number of players n is a multiple of 4 — otherwise the innermost pair go to opposite teams, with the better of those two going to team A).

My guess is, it’ll be virtually indistinguishable, expected-discrepancy-wise, from the ABBA algorithm.

I’m not sure Barry’s algorithm would be as acceptable in a playground situation. Is it more insulting to be picked last or to be nominated as the worst player while there are still many more to be selected :)

An equivalent way to run my algorithm is to alternate picks with A going first and B second for roughly half the rounds, then switching to B first, A second for the rest (thus giving B two consecutive picks midway through). E.g, for n=8, the picks go ABABBABA, while for n=10 they go ABABABBABA.

In general, any “playground algorithm” amounts to assigning to each (even) n, an n-bit binary number (letting A=1, B=0) with equal numbers of 1′s and 0′s. There is, for example, the bully’s algorithm, which gives numbers like 11110000. The expected discrepancy for the bully’s algorithm is obviously going to be quite large!

Let’s define the “value” of a player to be the number of players on the other team that he or she is better than — i.e., the “value” of each 1 is the number of 0′s to its right, and likewise the “value of each 0 is the number of 1′s to its right. The “strength” of a team is the sum of its players’ values. The “bias” of an algorithm is the difference between the two teams’ strengths. It is a function of n. For the bully’s algorithm, the bias is a whopping k^2, where k=n/2. For the classic ABAB algorithm, it’s simply k. And for Brian’s ABBA and my ABA’B’ approach, it’s 0 when k is even and 1 when k is odd. (It’s a nice exercise to show that you cannot get 0 bias when k is odd, no matter what you do.)

My guess is that, at least asymptotically, the expected discrepancy for any playground algorithm is purely a function of its bias divided by k. I’m not sure what I mean by “purely.” I’ll leave that for someone else to figure out….

Hi guys. I’m getting confused by my own blog. What is the “bully’s algorithm”? I had used the term “two bullies algorithm” (or words to that effect) to mean essentially the same thing as the ABAB algorithm. Barry, you have something else in mind? Or maybe I’ve just dropped a stitch. I’ve been a bit out of it, in more than one way — now including being out of town. Because I may not be able to attend to this forum from moment to moment over the next two weeks, I’ve set comments to post automatically, without my intervention. So please carry on without me. (But the spam filter remains in place.)

–Brian

Brian, I missed your use of “two bullies.” My “bully’s algorithm” has just one bully, who picks all the best players, leaving the scrawny kids for the other team — that’s what 11110000 (or AAAABBBB, in your notation) does with 8 kids.

My point, if I have one, is that you should be able to get some sense of how big the expected discrepancy will be by measuring what I call the “strength” of each teams and taking the difference (which I call the “bias”). My “bully’s algorithm” is quadratically biased (it grows with the square of the number of players), the classic ABAB algorithm is linearly biased, and your ABBA and my ABA’B’ algorithms are essentially unbiased (i.e., either 0 or 1).

The following algorithm (call it ABCABC-BABA) uses a third bully in the following way:

Do ABCABC until all the players have been distributed in three groups. Then, take the players in C and distribute them using the BABA algorithm. According to my program this one is better than the ABAB but not as good as the ABBA. May be some kind of ‘buffer’ like this can improve the result but the team with the first choice has still a big advantage.

Seb’s algorithm is equivalent to ABBABA, which works best when n is a multiple of 6, say n=6m. My “strength” analysis says this algorithm produces a bias of m in favor of team B, which suggests the discrepancy should also favor team B (but not as much as team A is favored by the ABAB algorithm, which has bias 3m). So if Seb’s program shows his algorithm still favors team A, then either there’s something amiss in his program, or my “strength” analysis is worthless. The latter possibility seems the more likely….

Barry, I made some new experiments and I think you are right. Team B seems to be favored by the algorithm ABBABA. Thank you. You should continue trusting your “strength” analysis.

Hi there-

Isn’t this the Morse-Thue sequence?

ABBA-BAAB-BAAB-ABBA…

Check out “Fair Division” works by Brams and Taylor. I think they prove some cool things about Morse-Thue and its relation to the playground picking.