Running on Empty

by Brian Hayes

Published 24 November 2006

Driving over the river and through the woods yesterday, I was running low on fuel. My car has two kinds of instruments to tell me that I’ll soon be standing by the side of the road feeling foolish. A conventional gas gauge shows the fraction of a tankful remaining, presumably based on readings from some sort of float mechanism inside the tank. The second instrument measures the rate of fuel flow to the engine, showing the result on a digital display that can be set to any of three modes, labeled “consumption,” “average consumption” and “range.” The two “consumption” modes are calibrated in miles per gallon; the “range” mode gives an estimate of the distance remaining until the tank is empty, in miles. When you’re nervous about whether or not you can make it to the next gas station, the range is clearly of interest.

But keeping an eye on the range estimate is also somewhat disconcerting. If the meter says you can last another 23 miles, and then you drive a mile, it seems reasonable to expect that the meter will report a remaining range of 22 miles. In fact it may well say 19 miles, or 23, or even 26. It’s particularly bizarre to see the range increase as you continue driving.

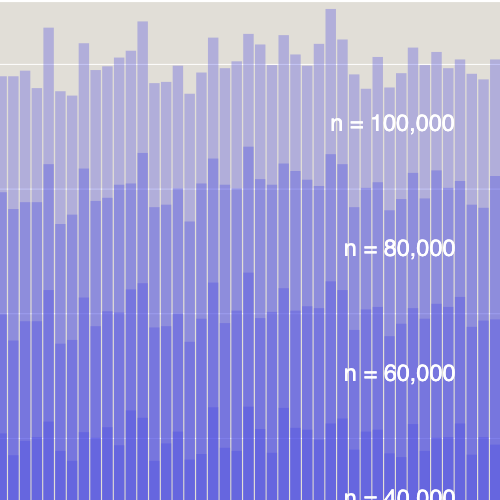

What’s going on here? It’s not hard to guess. The estimated range is simply the number of gallons remaining in the tank multiplied by the fuel-use rate in miles per gallon. Both measurements doubtless have some noise in them, but variations in the fuel flow rate are the major cause of fluctuations in the range estimate. For my car, the instantaneous fuel economy dips down below 10 miles per gallon under hard acceleration, and it appears to go well above 100 miles per gallon when coasting downhill. (The meter tops out at 99.9 mpg.) These variations could alter the range estimate by a factor of 10 or more. Strictly speaking, the fluctuating estimates are not wrong—they indicate the actual range if you were to continue driving exactly as you were at the moment of measurement—but some averaging or filtering would seem sensible.
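
To put numbers on that, here is a minimal sketch of the unsmoothed calculation. The 10 and 99.9 mpg figures are the extremes mentioned above; the one gallon left in the tank and the 30 mpg cruising figure are assumptions chosen only for illustration, and `instantaneous_range` is a hypothetical name, not anything taken from the car's firmware.

```python
def instantaneous_range(gallons_left, mpg_now):
    """Range in miles if the current fuel economy persisted until empty."""
    return gallons_left * mpg_now


gallons_left = 1.0  # assumed amount of fuel remaining

# (driving condition, instantaneous miles per gallon)
readings = [
    ("hard acceleration", 10.0),   # low end described in the text
    ("steady cruising", 30.0),     # assumed typical value
    ("coasting downhill", 99.9),   # where the meter tops out
]

for label, mpg in readings:
    miles = instantaneous_range(gallons_left, mpg)
    print(f"{label:>18}: {miles:5.1f} miles")
```

With the same fuel in the tank, the unfiltered estimate differs by roughly a factor of 10 between the two extremes.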

In fact, I think the range readings I see on my dashboard instrument are smoothed to some extent. The number is updated at intervals of about 30 seconds, and it may reflect an average calculated over a somewhat longer period. The question I want to ask is this: What is the optimum averaging interval—optimal in the sense that it minimizes some measure of error in the estimates? I doubt there can be any definitive answer without making some assumptions about the nature of the fluctuations, but I have a heuristic proposal that seems pretty good to me.

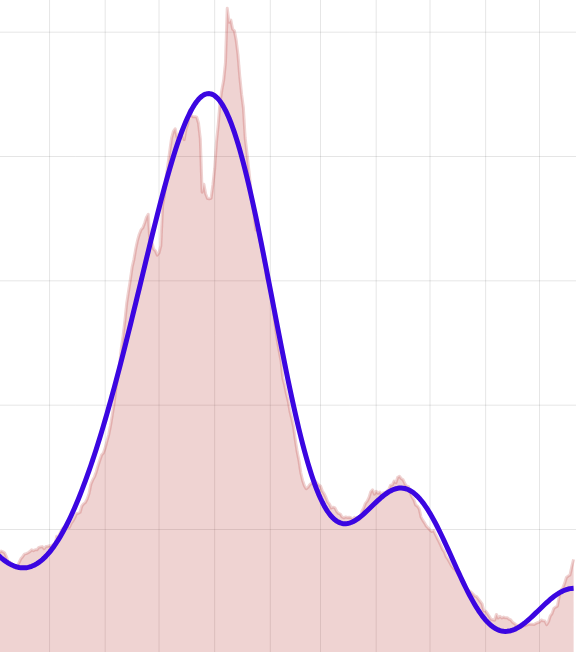

Here’s how I was thinking about the problem during my Thanksgiving pilgrimage. Suppose you keep driving until the tank runs dry, all the while recording your distance and rate of fuel consumption, moment by moment. Retrospectively, then, it’s easy to determine the number that the range meter should have been displaying at any point during the trip: You just measure the distance backward from the point where the engine died, and by definition that’s the range remaining. But note that for every point along the route, this “retrodicted” range should be equal to the number of gallons left in the tank at that point multiplied by the fuel-use rate (in miles per gallon) averaged over the remainder of the distance. This fact suggests a perfect estimation strategy: You should always average the fuel-use rate over the remaining range. Unfortunately, the remaining range is exactly what you’re trying to calculate, so this algorithm is not very practical. But perhaps we can approximate it.
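
The identity behind the “retrodicted” range can be written out explicitly. The notation is an assumption introduced here for illustration: $x$ is the current odometer reading, $x_e$ is the reading where the engine dies, $c(s)$ is the fuel burned per mile at position $s$, and $G(x)$ is the fuel in the tank at $x$.

```latex
G(x) = \int_{x}^{x_e} c(s)\,ds,
\qquad
\bar{m}(x) = \frac{x_e - x}{\int_{x}^{x_e} c(s)\,ds},
\qquad
x_e - x = G(x)\,\bar{m}(x).
```

Note that for the identity to hold exactly, the average $\bar{m}$ has to be total miles divided by total gallons over the remaining stretch, which is generally not the same as a plain mean of the instantaneous mpg readings.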

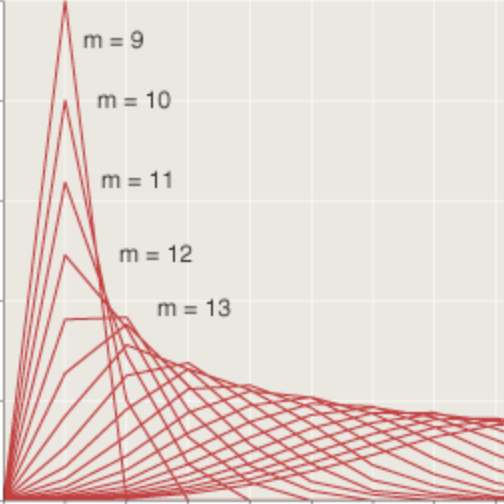

To reiterate: If the remaining range is n miles, then the ideal is to estimate this range by averaging the fuel consumption over the next n miles. We can’t quite do that, for two reasons. First, we don’t know what the average consumption will be in the n miles to come; it will depend on terrain, speed, traffic conditions, and many other imponderables. Second, we don’t even know what n is; that’s what we’re trying to estimate. But don’t despair. To cope with the first problem, we can choose some other interval of n miles as a surrogate for the n miles just ahead; the obvious choice is the n miles just behind us. As for the unknown quantity n, we calculate it iteratively. Make some initial guess r0 about the fuel consumption rate, perhaps using the long-term average since the car was manufactured. Multiply r0 by the gallons in the tank to get a first estimate n0 of the remaining range. Then take the average fuel consumption over the preceding n0 miles and again multiply by the number of gallons to get a better range estimate, n1. Only a few repetitions of this process ought to be needed to converge on a pretty good estimate of n. That estimate, of course, is what the dashboard meter will report.
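
The iteration can be sketched in a few lines of Python. Everything about the data representation is an assumption made for illustration: the trip log is a pair of lists of cumulative odometer and cumulative fuel readings taken at the same instants, readings between samples are linearly interpolated, and the function names, the toy log and the fixed count of five iterations are hypothetical choices rather than anything a real dashboard is known to do.

```python
from bisect import bisect_left


def average_mpg_over_last(miles, odometer, fuel_burned, fallback_mpg):
    """Miles per gallon over the most recent `miles` of the trip log.

    odometer[i] and fuel_burned[i] are cumulative readings taken together;
    odometer must be strictly increasing. If the log is shorter than
    `miles`, the whole log is used.
    """
    end_miles, end_fuel = odometer[-1], fuel_burned[-1]
    start_miles = max(odometer[0], end_miles - miles)

    # Locate the start of the window and interpolate the fuel reading there.
    i = bisect_left(odometer, start_miles)
    if odometer[i] == start_miles:
        start_fuel = fuel_burned[i]
    else:
        span = odometer[i] - odometer[i - 1]
        frac = (start_miles - odometer[i - 1]) / span
        start_fuel = fuel_burned[i - 1] + frac * (fuel_burned[i] - fuel_burned[i - 1])

    fuel_used = end_fuel - start_fuel
    if fuel_used <= 0:
        return fallback_mpg  # empty window or no fuel burned: keep the prior guess
    return (end_miles - start_miles) / fuel_used


def estimate_range(gallons_left, odometer, fuel_burned, r0, iterations=5):
    """Repeatedly average mpg over the last n miles, where n is the current estimate."""
    n = r0 * gallons_left  # first estimate n0 from the initial guess r0
    for _ in range(iterations):
        mpg = average_mpg_over_last(n, odometer, fuel_burned, fallback_mpg=r0)
        n = mpg * gallons_left
    return n


# Toy log (assumed): 100 miles at 25 mpg, then 20 miles at 20 mpg.
odometer = [0.0, 100.0, 120.0]
fuel_burned = [0.0, 4.0, 5.0]

print(estimate_range(1.0, odometer, fuel_burned, r0=30.0))
```

In this toy log, with one gallon left and an initial guess of 30 mpg, the estimates settle close to 20 miles, which is the distance most recently covered at 20 mpg. The error shrinks by roughly a factor of five per pass here, but that rate depends entirely on the made-up data.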

With this scheme, as the estimated range gets smaller, it will also get more volatile, because the consumption rate will be averaged over a smaller interval. I argue that this tendency to wider fluctuations is not a failure of the algorithm. In the last few miles before the tank runs dry, the range really does depend sensitively on whether you’re descending a hill on the open highway or stopping and starting at a series of city traffic lights.

Something tells me I’m not the first person to think about this problem or the first to propose this solution. The same issues arise in lots of other contexts, such as predicting how long the battery will last in a laptop computer. If anyone has a plan that can beat mine, I’d be pleased to hear about it.

By the way, I arrived on time for Thanksgiving dinner, with a few drops left in the tank.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 24 November 2006

Converted to Eleventy framework: 22 April 2025

I think there’s a simpler way, at least in principle. All you need to do is keep track of the function O(g), which is the Odometer reading when the car has burned a total of g gallons of gas. (I’m thinking of g as representing ALL the gas you ever put in the car, from day 1, not just what you’ve burnt since the last fill-up, but it really doesn’t much matter.) Unless you spend a lot of time driving in reverse, O(g) is a non-decreasing function of g. If the car has burned g gallons and, at the moment, has r gallons remaining in the tank, then a reasonable estimate for the remaining range is O(g)-O(g-r) — i.e., the distance you’ve most recently driven on the amount of gas that remains.
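
A minimal sketch of this O(g) idea, assuming the car keeps a sampled log of cumulative fuel burned and the odometer reading at the same instants, with linear interpolation between samples. The function names and the toy log are hypothetical, and the fuel log is assumed to be strictly increasing.

```python
from bisect import bisect_right


def odometer_at(g, fuel_log, odometer_log):
    """O(g): odometer reading when cumulative fuel burned was g gallons."""
    if g <= fuel_log[0]:
        return odometer_log[0]   # before the log starts: clamp to first reading
    if g >= fuel_log[-1]:
        return odometer_log[-1]  # at or past the latest reading
    i = bisect_right(fuel_log, g)  # fuel_log[i - 1] <= g < fuel_log[i]
    frac = (g - fuel_log[i - 1]) / (fuel_log[i] - fuel_log[i - 1])
    return odometer_log[i - 1] + frac * (odometer_log[i] - odometer_log[i - 1])


def range_from_recent_fuel(r, fuel_log, odometer_log):
    """O(g) - O(g - r): distance most recently driven on r gallons."""
    g = fuel_log[-1]  # total fuel burned so far
    return odometer_at(g, fuel_log, odometer_log) - odometer_at(g - r, fuel_log, odometer_log)


# Toy log (assumed): 100 miles on the first 4 gallons, 20 miles on the next 1.
fuel_log = [0.0, 4.0, 5.0]
odometer_log = [0.0, 100.0, 120.0]

print(range_from_recent_fuel(1.0, fuel_log, odometer_log))
```

No iteration is needed here, because the lookup is indexed by fuel rather than by distance. The iterative scheme in the article settles on a range n for which the fuel burned over the last n miles equals the fuel remaining, and that is the same distance this lookup returns directly.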