Another Technological Tragedy

by Brian Hayes

Published 20 October 2018

One Thursday afternoon last month, dozens of fires and explosions rocked three towns along the Merrimack River in Massachusetts. By the end of the day 131 buildings were damaged or destroyed, one person was killed, and more than 20 were injured. Suspicion focused immediately on the natural gas system. It looked like a pressure surge in the pipelines had driven gas into homes where stoves, heaters, and other appliances were not equipped to handle the excess pressure. Earlier this week the National Transportation Safety Board released a brief preliminary report supporting that hypothesis.

A house in Lawrence, Mass., burned on September 13, 2018, as a result of a natural gas pressure surge. (Image from the NTSB report.)

A house in Lawrence, Mass., burned on September 13, 2018, as a result of a natural gas pressure surge. (Image from the NTSB report.)

I had believed such a catastrophe was all but impossible. The natural gas industry has many troubles, including chronic leaks that release millions of tons of methane into the atmosphere, but I had thought that pressure regulation was a solved problem. Even if someone turned the wrong valve, failsafe mechanisms would protect the public. Evidently not. (I am not an expert on natural gas. While working on my book Infrastructure, I did some research on the industry and the technology, toured a pipeline terminal, and spent a day with a utility crew installing new gas mains in my own neighborhood. The pages of the book that discuss natural gas are online here.)

The hazards of gas service were already well known in the 19th century, when many cities built their first gas distribution systems. Gas in those days was not “natural” gas; it was a product manufactured by roasting coal, or sometimes the tarry residue of petroleum refining, in an atmosphere depleted of oxygen. The result was a mixture of gases, including methane and other hydrocarbons but also a significant amount of carbon monoxide. Because of the CO content, leaks could be deadly even if the gas didn’t catch fire.

Every city needed its own gasworks, because there were no long-distance pipelines. The output of the plant was accumulated in a gasholder, a gigantic tank that confined the gas at low pressure—less than one pound per square inch above atmospheric pressure (a unit of measure known as pounds per square inch gauge, or psig). The gas was gently wafted through pipes laid under the street to reach homes at a pressure of 1/4 or 1/2 psig. Overpressure accidents were unlikely because the entire system worked at the same modest pressure. As a matter of fact, the greater risk was underpressure. If the flow of gas was interrupted even briefly, thousands of pilot lights would go out; then, when the flow resumed, unburned toxic gas would seep into homes. Utility companies worked hard to ensure that would never happen.

Gasholders looming over a neighborhood in Genoa, Italy, once held manufactured gas for use at a steelmill. The photograph was made in 2001; Google Maps shows the tanks have since been demolished.

Gas technology has evolved a great deal since the gaslight era. Long-distance pipelines carry natural gas across continents at pressures of 1,000 psig or more. At the destination, the gas is stored in underground cavities or as a cryogenic liquid. It enters the distribution network at pressures in the neighborhood of 100 psig. The higher pressures allow smaller diameter pipes to serve larger territories. But the pressure must still be reduced to less than 1 psig before the gas is delivered to the customer. Having multiple pressure levels complicates the distribution system and requires new safeguards against the risk of high-pressure gas going where it doesn’t belong. Apparently those safeguards didn’t work last month in the Merrimack valley.

Cryogenic storage tanks in Everett, Mass., near Boston, hold liquified natural gas that supplies utilities in surrounding communities.

The gas system in that part of Massachusetts is operated by Columbia Gas, a subsidiary of a company called NiSource, with headquarters in Indiana. At the time of the conflagration, contractors for Columbia were upgrading distribution lines in the city of Lawrence and in two neighboring towns, Andover and North Andover. The two-tier system had older low-pressure mains—including some cast-iron pipes dating back to the early 1900s—fed by a network of newer lines operating at 75 psig. Fourteen regulator stations handled the transfer of gas between systems, maintaining a pressure of 1/2 psig on the low side.

The NTSB preliminary report gives this account of what happened around 4 p.m. on September 13:

The contracted crew was working on a tie-in project of a new plastic distribution main and the abandonment of a cast-iron distribution main. The distribution main that was abandoned still had the regulator sensing lines that were used to detect pressure in the distribution system and provide input to the regulators to control the system pressure. Once the contractor crews disconnected the distribution main that was going to be abandoned, the section containing the sensing lines began losing pressure.

As the pressure in the abandoned distribution main dropped about 0.25 inches of water column (about 0.01 psig), the regulators responded by opening further, increasing pressure in the distribution system. Since the regulators no longer sensed system pressure they fully opened allowing the full flow of high-pressure gas to be released into the distribution system supplying the neighborhood, exceeding the maximum allowable pressure.

When I read those words, I groaned. The cause of the accident was not a leak or an equipment failure or a design flaw or a worker turning the wrong valve. The pressure didn’t just creep up beyond safe limits while no one was paying attention; the pressure was driven up by the automatic control system meant to keep it in bounds. The pressure regulators were “trying” to do the right thing. Sensor readings told them the pressure was falling, and so the controllers took corrective action to keep the gas flowing to customers. But the feedback loop the regulators relied on was not in fact a loop. They were measuring pressure in one pipe and pumping gas into another.

The conjectured cause of the fires and explosions in Lawrence and nearby towns is a misconfigured pressure-control system, according to the NTSB preliminary report. Service was switched to a new low-pressure gas main, but the pressure regulator continued to monitor sensors attached to the old pipeline, now abandoned and empty.

The NTSB’s preliminary report offers no conclusions or recommendations, but it does note that the contractor in Lawrence was following a “work package” prepared by Columbia Gas, which did not mention moving or replacing the pressure sensors. Thus if you’re looking for someone to blame, there’s a hint about where to point your finger. The clue is less useful, however, if you’re hoping to understand the disaster and prevent a recurrence. “Make sure all the parts are connected” is doubtless a good idea, but better still is building a failsafe system that will not burn the city down when somebody goofs.

Suppose you’re taking a shower, and the water feels too warm. You nudge the mixing valve toward cold, but the water gets hotter still. When you twist the valve a little further in the same direction, the temperature rises again, and the room fills with steam. In this situation, you would surely not continue turning the knob until you were scalded. At some point you would get out of the shower, shut off the water, and investigate. Maybe the controls are mislabeled. Maybe the plumber transposed the pipes.

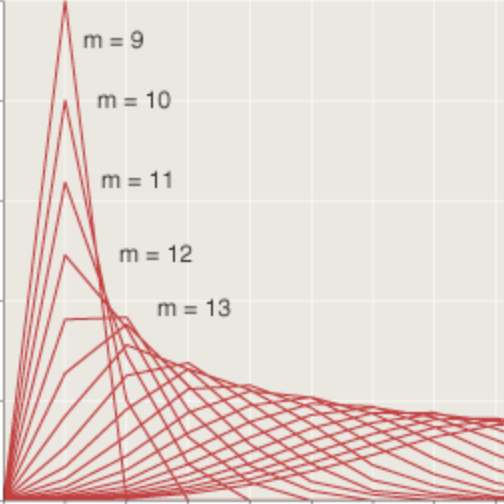

Since you do so well controlling the shower, let’s put you in charge of regulating the municipal gas service. You sit in a small, windowless room, with your eyes on a pressure gauge and your hand on a valve. The gauge has a pointer indicating the measured pressure in the system, and a red dot (called a bug) showing the desired pressure, or set point. If the pointer falls below the bug, you open the valve a little to let in more gas; if the pointer drifts up too high, you close the valve to reduce the flow. (Of course there’s more to it than just open and close. For a given deviation from the set point, how far should you twist the valve handle? Control theory answers this question.)

It’s worth noting that you could do this job without any knowledge of what’s going on outside the windowless room. You needn’t give a thought to the nature of the “plant,” the system under control. What you’re controlling is the position of the needle on the gauge; the whole gas distribution network is just an elaborate mechanism for linking the valve you turn with the gauge you watch. Many automatic control system operate in exactly this mindless mode. And they work fine—until they don’t.

As a sentient being, you do in fact have a mental model of what’s happening outside. Just as the control law tells you how to respond to changes in the state of the plant, your model of the world tells you how the plant should respond to your control actions. For example, when you open the valve to increase the inflow of gas, you expect the pressure to increase. (Or, in some circumstances, to decrease more slowly. In any event, the sign of the second derivative should be positive.) If that doesn’t happen, the control law would call for making an even stronger correction, opening the valve further and forcing still more gas into the pipeline. But you, in your wisdom, might pause to consider the possible causes of this anomaly. Perhaps pressure is falling because a backhoe just ruptured a gas main. Or, as in Lawrence last month, maybe the pressure isn’t actually falling at all; you’re looking at sensors plugged into the wrong pipes. Opening the valve further could make matters worse.

Could we build an automatic control system with this kind of situational awareness? Control theory offers many options beyond the simple feedback loop. We might add a supervisory loop that essentially controls the controller and sets the set point. And there is an extensive literature on predictive control, where the controller has a built-in mathematical model of the plant, and uses it to find the best trajectory from the current state to the desired state. But neither of these techniques is commonly used for the kind of last-ditch safety measures that might have saved those homes in the Merrimack Valley. More often, when events get too weird, the controller is designed to give up, bail out, and leave it to the humans. That’s what happened in Lawrence.

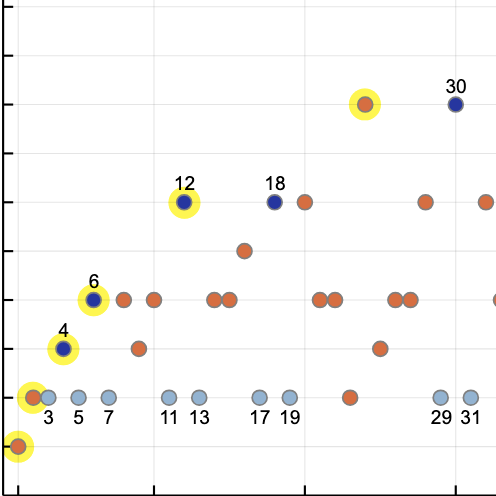

Minutes before the fires and explosions occurred, the Columbia Gas monitoring center in Columbus, Ohio [probably a windowless room], received two high-pressure alarms for the South Lawrence gas pressure system: one at 4:04 p.m. and the other at 4:05 p.m. The monitoring center had no control capability to close or open valves; its only capability was to monitor pressures on the distribution system and advise field technicians accordingly. Following company protocol, at 4:06 p.m., the Columbia Gas controller reported the high-pressure event to the Meters and Regulations group in Lawrence. A local resident made the first 9-1-1 call to Lawrence emergency services at 4:11 p.m.

Columbia Gas shut down the regulator at issue by about 4:30 p.m.

I admit to a morbid fascination with stories of technological disaster. I read NTSB accident reports the way some people consume murder mysteries. The narratives belong to the genre of tragedy. In using that word I don’t mean just that the loss of life and property is very sad. These are stories of people with the best intentions and with great skill and courage, who are nonetheless overcome by forces they cannot master. The special pathos of technological tragedies is that the engines of our destruction are machines that we ourselves design and build.

Looking on the sunnier side, I suspect that technological tragedies are more likely than Oedipus Rex or Hamlet to suggest a practical lesson that might guide our future plans. Let me add two more examples that seem to have plot elements in common with the Lawrence gas disaster.

First, the meltdown at the Three Mile Island nuclear power plant in 1979. In that event, a maintenance mishap was detected by the automatic control system, which promptly shut down the reactor, just as it was supposed to do, and started emergency pumps to keep the uranium fuel rods covered with cooling water. But in the following minutes and hours, confusion reigned in the control room. Because of misleading sensor readings, the crowd of operators and engineers believed the water level in the reactor was too high, and they struggled mightily to lower it. Later they realized the reactor had been running dry all along.

Second, the crash of Air France 447, an overnight flight from Rio de Janeiro to Paris, in 2009. In this case the trouble began when ice at high altitude clogged pitot tubes, the sensors that measure airspeed. With inconsistent and implausible speed inputs, the autopilot and flight-management systems disengaged and sounded an alarm, basically telling the pilots “You’re on your own here.” Unfortunately, the pilots also found the instrument data confusing, and formed the erroneous opinion that they needed to pull the nose up and climb steeply. The aircraft entered an aerodynamic stall and fell tail-first into the ocean with the loss of all on board.

In these events no mechanical or physical fault made an accident inevitable. In Lawrence the pipes and valves functioned normally, as far as I can tell from press reports and the NTSB report. Even the sensors were working; they were just in the wrong place. At Three Mile Island there were multiple violations of safety codes and operating protocols; nevertheless, if either the automatic or the human controllers had correctly diagnosed the problem, the reactor would have survived. And the Air France aircraft over the Atlantic was airworthy to the end. It could have flown on to Paris if only there had been the means to level the wings and point it in the right direction.

All of these events feel like unnecessary disasters—if we were just a little smarter, we could have avoided them—but the fires in Lawrence are particularly tormenting in this respect. With an aircraft 35,000 feet over the ocean, you can’t simply press Pause when things don’t go right. Likewise a nuclear reactor has no safe-harbor state; even after you shut down the fission chain reaction, the core of the reactor generates enough heat to destroy itself. But Columbia Gas faced no such constraints in Lawrence. Even if the pressure-regulating system is not quite as simple as I have imagined it, there is always an escape route available when parameters refuse to respond to control inputs. You can just shut it all down. Safeguards built into the automatic control system could do that a lot more quickly than phone calls from Ohio. The service interruption would be costly for the company and inconvenient for the customers, but no one would lose their home or their life.

Control theory and control engineering are now embarking on their greatest adventure ever: the design of self-driving cars and trucks. Next year we may see the first models without a steering wheel or a brake pedal—there goes the option of asking the driver (passenger?) to take over. I am rooting for this bold undertaking to succeed. I am also reminded of a term that turns up frequently in discussions of Athenian tragedy: hubris.

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 20 October 2018

Converted to Eleventy framework: 22 April 2025

Great post. I too find these technological disaster analyses fascinating. I am a big fan of those airplane disaster tv shows. My wife doesn’t get it and can’t watch as it reminds her of vulnerabilities while flying. Instead, I find them mildly reassuring in that they end with a mention of how they’ve fixed things so the particular accident won’t happen again. However, the real attraction of these shows is the real-life detective stories. Perhaps they should start another series to expand beyond just airplane crashes. There have been a few special shows to treat one-off disasters but I’m sure there are more accidents they could cover, such as the recent one you mention here.

Fantastic post… when that story originally broke I couldn’t understand why it wasn’t getting more widespread coverage, and went days with only vague explanation. I imagined that either press outlets had agreed to keep it a tad hush-hush so as not to panic millions of people on natural gas, or else it was a case of some distracting Trumpism focus at the time (I don’t remember) sucking all the news oxygen out of the air for other stories!

“Hubris” is a good word, though the word that quickly came to my mind, that scientists dread, was simply “Ooops.” And as far as driverless cars go, I think we’ll eventually get there… but the road to success will be littered with tragedies and interruptions… and a lot of “ooops.”

The Chemical Safety Board produces some pretty fascinating videos that reconstruct the chains of events that led to large industrial accidents in chemical plants. The videos normally conclude with recommendations for voluntary actions industry can take to prevent similar incidents in future. They basically summarize the results of detailed investigations by CSB agents. The videos are well produced and, apart from those accidents that involve injury or death, they are quite entertaining if you happen to be in a forensic frame of mind. Here’s an example of one of their videos: https://youtu.be/_icf-5uoZbc

The reason contractors, rather than regular employees, were upgrading the lines is that Columbia Gas and parent National Grid have locked out 1,250 union workers after negotiations failed. It cannot be proved that experienced workers would have been able to avoid either this disaster or a similar issue in Woburn, MA a week later, but it is rather suggestive that both repairs were done by temporary workers hired during the dispute. See Boston Globe and US News . That said, the basic design of the system is flawed. There are breakers, fuses and overvoltage protectors on electrical distribution system and at the entrance panel; there should be similar protection for gas distribution.

Please correct me if I’m wrong, but I don’t believe that Columbia Gas is owned by National Grid; it is listed as a subsidiary of NiSource, which as far as I know has no ownership connection with NG. (National Grid does supply electricity in Lawrence and the Andovers, but not gas.) Columbia was formerly the Bay State Gas Company. See history and map of service areas.

The locked out NG employees have just been cleared by their unions and others to do temporary work restoring service in the Merrimack Valley. (See MassLive story.)

The situation in Woburn is different: That is indeed NG territory, and at the time of the incident the work was being done by nonunion replacements and management employees.

I don’t know whether the use of contractors might have contributed to the problems in South Lawrence, nor do I know whether there are any labor-management disputes going on there. Maybe the full NTSB report will address the question.

As for the differences between electrical and gas systems, I agree. One reason for those differences is that the electrical grid operates on a millisecond time scale; when there’s a fault, there’s just no time to call a supervisor. The protective systems are automated by necessity. Gas moves much slower, which seems to have led to the belief that you could afford to wait for human intervention. Recent events suggest otherwise.

Super interesting read, thank you for this!

I note that there is further lively discussion of all this on Hacker News.

I’m interested to know I’m not the only person who reads NTSB reports. I have a file drawer full of old paper reports as well as a trove of PDFs. This disaster was preventable like all disasters. We need to learn these lessons that we keep forgetting.

People like to call this “a technological disaster”, like they like to call hurricane Katrina “a natural disaster”. But especially in the US, infrastructure is often at shocking maintenance levels, warnings are ignored for half a century, and then when disaster strikes, you need to blame the actual cause - not technology or nature, but governance.

(I’m from a country where pretty much all energy is gas based, most of the country is below sea level and strong storms regularly happen, and guess what? The choice was made to put governance and money towards the problem and nothing bad happens).

I would caution against this hubris. I was at one of the two projects on which a flaw in the design and construction of a type of structural steel connection (the “moment frame”) was discovered as a consequence of the 1995 Northridge earthquake. The structural engineering firm that had designed the structure gave a tour of the damaged connections to a group of Japanese structural engineers, who walked and looked and said (quite literally and obviously) “tsk tsk tsk.” They said that such a problem could never happen in Japan where design standards are superior and construction and inspection are at a much higher standard. Almost precisely one year later, the Kobe earthquake, of the same magnitude as the Northridge temblor, provided a tragic demonstration that the Japanese design and construction methods were not so superior after all.

Correction: that should be the 1994 Northridge earthquake.

I just want to reiterate the parent comment that by framing what happened in Lawrence in terms of technology, you end up downplaying the mistake involved, which appears to be gross negligence. It truly would be hubris to believe that a more sophisticated control loop would be less susceptible to gross negligence rather than more.

One of the ironies of the whole “self - driving car” business is that, from the perspective of transportation infrastructure, it’s unclear that such a car solves more problems than it creates. Why not trains? And it highlights the question of whether infrastructure planning is really compatible with strong profit motives, much less the stock market speculation endemic to the Silicon Valley players involved.

Thanks! This was really well written.

Your anecdotes remind me of the Russian officer Petrov who ignored the missle alarm and effectively avoided a nuclear war with US. I think Hannah Fry talks about it in her book.

“Control theory and control engineering are now embarking on their greatest adventure ever: the design of self-driving cars and trucks. Next year we may see the first models without a steering wheel or a brake pedal—there goes the option of asking the driver (passenger?) to take over. I am rooting for this bold undertaking to succeed. I am also reminded of a term that turns up frequently in discussions of Athenian tragedy: hubris.”

But there is one difference. We are quite used to the cynical fact that human driven cars kill thousands of people every year without complaining too much about it. While no one is used to a similar fact in any other industrial infrastructure. Thus, computer drivers have to compete on that level. If automated cars will kill LESS people than human driven ones, it will already regarded as a success, even if some mayhem surely is bound to happen with automated cars, too. It seems that this is a very special case of risk assessment, possibly tracing back even to pre-car times, since horses were not that safe all the time either and there was historically always a risk of fatal accident associated with travelling.

Well, maybe. If you look over to medical technology, we see that even a single “charismatic death” of a terminal patient can block further experiments for a strikingly long time. That said, AIUI we’ve already had the first few deaths based on driverless cars, and they were basically… speed bumps, to the overall endeavor. A more dramatic disaster (say, a multi-car pileup or a hacking incident) might well seriously interfere with the advent of driverless cars.

It’s not so much that humans are bad at estimating probabilities, as that they have a number of “wet-wired” algorithms for dealing with risk, that aren’t necessarily appropriate for modern contexts.

Regarding TMI…a couple clarifications. It is a Pressurized Water Reactor, meaning the core is always supposed to be covered with water. There is a device called the pressurizer which has a steam bubble in it that maintains the pressure in the system. (Upwards of 2000psi). The problem was the level transmitter in the pressurizer, operators turned off the safety injection system as they thought the pressurizer was going to become full which could result in over pressurizing the reactor coolant system. The opposite happened…pressurizer emptied, transferring the steam bubble to the reactor. Operators then realized the pressurizer went low and turned on the safety injection system. Unfortunately as the reactor got uncovered due to steam decay heat created more steam forcing more water out of the reactor. Pressurizer level rose (due to water displaced from reactor being forced into it) operators turned off safety injection again thinking they had recovered not realizing the reactor core was uncovered.

I’m reminded of Richard Cook’s 1998 paper on How Complex Systems Fail. It provides a nice framework for thinking through our defenses of these failure modes.

Interesting read but a little off the mark. I would urge you to continue to research this subject and talk with Gas Pipeline Engineers to discuss the tools and control systems at our disposal. One good resource is the America Gas Association and PHMSA. As a Supervising Gas Engineer this terrible event hits very close to home for me and my staff. We have discussed the NTSB report at length and how to avoid the same mistakes in our own designs. We all take the safety of the community we serve very seriously and are extremely saddened by individuals who think they “know” how to do our jobs. At the end of the day this event was caused by poor communication and understanding of where the regulator station control lines were placed and how changes to the gas system would change how the regulators operated. This is a lesson that hits very close to home and heart as the Gas Utility business is a very small community. I urge you to continue to seek to understand how these systems are built and how they are operated before passing judgement on how you perceive these systems should be designed. Sadly human error and poor attention to details of this replacement project cost this community a great deal. My only hope is that my peers learn from this tragedy and don’t make similar mistakes.

Thanks for your comments. Do you know of a published account of the accident that gives a more detailed or more accurate explanation? Perhaps something in the trade press? I would be grateful for any pointers.

If you might be interested in writing up your own explanation of the accident, please get in touch with me directly (brian@bit-player.org). I would be happy to consider publishing it here.

I don’t have any additional information other than the NTSB report and my years of experience designing and maintaining gas delivery systems. The AGA and GTI offer basic system designs. Your assumption is that this gas system has supervisory control offering feedback and direct control of the system from a Gas Control room more akin to a control system of a large chemical or power plant. That is not the typical case for Gas Distribution systems. SCADA is used mainly for monitoring of system pressures. Control of typical gas systems in done using spring or pilot operated regulators with set springs and control lines providing pressure information back to the regulator. Fisher Controls Systems actually offers a good summary of how this works in their regulator selection and sizing catalog. Based on what I have read in the NTSB report the downstream control lines were not transeferred to the new downstream distribution main, thus the regulators received no feedback telling them the close when downstream set pressure was reached. To assume the system has a manual override shows a lack of knowledge of how these systems at designed.

Thanks for the further input.

Just to clarify: When I mentioned the possibility of “an automatic control system with … situational awareness”, I was not talking about the SCADA system, nor was I suggesting that operators in Columbus should have been able to close valves in Lawrence. I was thinking of a more sophisticated pressure regulator replacing the existing hardware. As you point out, pressure regulators are largely simple, mechanical devices that sense line pressure and set an inlet throttle valve accordingly. When designs of this kind were introduced in the 1950s, they were the best we could do. We may be able to better now. A regulator could sense when the system is not responding as expected, and then shut the system down.

There’s an analogy in the electric power infrastructure. When a tree limb brushes against a power line, a device called a recloser interrupts the electric current briefly, but then tries closing the circuit again in case the problem was a momentary one. This avoids many nuisance power outages. But the recloser only tries reclosing two or three times before giving up and choosing safety over convenience. There’s no reason the gas system couldn’t be operated on similar principles: If the pressure keeps falling even as the inlet valve is opened wider, it’s a reasonable guess that something is seriously wrong, and a reasonable action is to shut the system down.

I would also like to say, in case I wasn’t clear the first time around, that my aim in writing on this subject is not to assess blame. Mistakes were made in Lawrence–that’s beyond dispute–but I don’t known who made them and I don’t think finding culprits is a useful way of preventing recurrences. We all make mistakes. I would like to see systems designed so that we can make mistakes without killing people or burning down neighborhoods.

In the weeks since I wrote this piece, we have witnessed another example of a control system that may have caused a disaster in spite of the best efforts of human operators. The crash of Lion Air flight 610 in Indonesia appears to have been caused by a control system responding to an erroneous sensor signal, which the pilots were not able to override. This explanation of the accident is a only preliminary hypothesis, but if it turns out to be correct, we may have another case where a little more situational awareness in the control system could have saved lives.

Great summary Paul!

From the timeline, you can see that the manual over-ride system was not up to the challenge:

What we have here is a system that takes 5 minutes develop an explosive pressure level, but 24 minutes to shut down after an overpressure alarm. OK, perhaps it would have been quicker than 24 minutes if staff hadn’t also been responding to the explosion, but no matter: it was > 5 minutes! (I hope this isn’t read as only a criticism of the gas workers in Lawrence. There’s always much more to it than that.)

Paul, I hope you will revisit the topic when the final NTSB report comes out. Thanks for this!