The Thrill of the Chase

by Brian Hayes

Published 11 July 2010

How I love to go out hunting on a bright Sunday morning—though it’s not my style to shoot furry/feathery/finny animals. My game is to get up early and stalk a wily factoid.

A posting from Mat Roberts, whose blog I’ve recently discovered, sent me out this morning to chase down a passage in How Long Is a Piece of String, a book by Rob Eastaway and Jeremy Wyndham:

The concept here seemed familiar, but the term “Lincoln Index” was new to me. Lincoln who? What index?

Google offered some useful clues. (Also a generous helping of false scents—books about Honest Abe that happen to have an index.) Without even clicking on a link I had the general context:

The Lincoln Index provides a way to measure population sizes of individual animal species. It is based on a capture/mark/recapture method…

So we’re talking ecology and population biology. The original idea was not to catch the same typo twice but to catch the same furry/feathery/finny creature twice. Interesting. However, the first couple of web pages that Google sent me to (here and here) told me nothing about Lincoln. And, oddly, I found no Wikipedia entry for “Lincoln Index.” If it’s not in Wikipedia, does it exist?

With a little more poking around, I stumbled upon another clue that seemed promising: a mention of “the Lincoln-Pearson equation for estimating population size.” I was still in the dark about Lincoln, but Pearson is quite a familiar figure. Surely that’s Karl Pearson, the pioneering statistician, who did much of his work in the biological sciences and might very well have come up with a scheme for estimating population sizes.

Back at Google, though, searching for “Lincoln-Pearson” turned up nothing pertinent other than the page I’d come from (though I did learn that Karl Pearson “read in chambers in Lincoln’s Inn” during his early years studying law).

More beating the bushes. Eventually I realized I had wandered into a blind alley. Somebody needs to hire a pair of proofreaders: The formula is not “Lincoln-Pearson” but “Lincoln-Petersen.” Try those names at Google and you’ll get an abundance of useful pointers. (You’ll also learn that Abraham Lincoln died in Petersen’s Boarding House, across the street from Ford’s Theater. Google is not just a search engine but also a coincidence engine.)

The particular web page where I finally got the correct names (notes for a course at North Carolina State University) explains that capture-mark-recapture methods

are used extensively to estimate populations of fish, game animals, and many non-game animals. The approach was first used by Petersen (1896) to study European plaice in the Baltic Sea and later proposed by Lincoln (1930) to estimate numbers of ducks. Petersen’s and Lincoln’s method is often referred to as the Lincoln-Petersen Index, even though it is not an index but a method to estimate actual population sizes. (Should it not be the Petersen-Lincoln Estimate?)

I decided to pursue Petersen first—and immediately ran into a few further bibliographic brambles. Some citations spell the name “Petersen” and others “Peterson.” Some give the initials “C. G. T.” and others “C. G. J.” or “C. J. G.” The date might be 1895 or 1896 or 1897. Here’s what I believe to be a correct citation:

Petersen, C. G. J. 1896. The yearly immigration of young plaice into the Limfjord from the German Sea. Report of the Danish Biological Station to the Home Department 6:1–48.

Wikipedia identifies our elusive author as Carl Georg Johannes Petersen (1860-1928). He was a founder of the Danish Biological Station, which was not in fact a station but a mobile laboratory—a decommissioned naval vessel that was moved around from year to year. In 1895, Petersen took the station to the Limfjord, a chain of bays, lakes and channels cutting across the Jutland peninsula in northern Denmark. There he studied the plaice fishery. (Back to Wikipedia: “The European plaice is a right-eyed flounder belonging to the Pleuronectidae family.” But let’s not get started on right-eyed and left-eyed flatfish, or we’ll never get to the end of this.)

Petersen’s report is available online, scanned from a copy belonging to the library of the Marine Biological Laboratory and Woods Hole Oceanographic Institution, and hosted by the Biodiversity Heritage Library of the Internet Archive. A second surprise: The report is written in English. But on reading through it I find only vague and murky connections between the work Petersen reports and the mark-recapture method of estimating populations. There’s nothing resembling the E1E2/S formula.

Petersen does describe a series of capture/mark/recapture experiments. A few hundred plaice were caught and marked by attaching numbered buttons, then put back in the water. Fishermen who recaught the labeled fish in later months were asked to report them. But the purpose of this study was not to estimate the total population; instead, Petersen used before-and-after measurements of the marked fish to estimate their growth rate.

In a much larger experiment, some 82,580 plaice (somebody must have counted them!) were transplanted into the fjord, and 10,900 of the fish were marked by having a hole punched in their dorsal fin. The number of marked fish was recorded as the plaice were caught during the coming year. It’s not clear whether the aim of this project was to estimate the total population, but in any case it didn’t work. The fraction of marked fish in the transplanted batch was about 1/7, but the marked fraction in the subsequent catches was 1/5. Petersen remarks, “This result is very strange,” and I have to agree.
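To see why the result looks strange, the two fractions can be turned into a population figure. This is only a rough sketch: it takes the rounded 1/5 figure at face value and assumes the later catches were a random sample of the transplanted fish. The variable names are illustrative, not Petersen's.

```python
# Figures from Petersen's report, as summarized above.
transplanted = 82_580   # plaice moved into the fjord
marked = 10_900         # of those, fish with a hole punched in the dorsal fin

# Marked fraction among the fish that were released (roughly 1/7).
released_fraction = marked / transplanted

# Later catches showed about one marked fish in every five (rounded figure).
caught_one_in = 5

# If catches were a random sample, marked / population = 1 / caught_one_in,
# so the population implied by the catches is marked * caught_one_in.
implied_population = marked * caught_one_in

print(f"marked fraction at release: {released_fraction:.3f}")
print(f"population implied by the catches: {implied_population:,}")
```

Under those assumptions the catches imply only 54,500 fish, well short of the 82,580 known to have been released, so a recapture estimate would have undercounted a population whose size was already known.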

When Petersen did try to estimate the plaice population, he didn’t rely on a recapture scheme. He went out with seine nets designed to dredge up every bottom fish in a measured plot, then extrapolated from the density of fish per unit area.

The whole report is fascinating fishy stuff, but it leaves me wondering just how Petersen came to be given credit for the resampling idea. As far as I can tell, it’s not to be found in this paper.

Having chased down Petersen, I turned back to Mr. Lincoln. Without much trouble I was able to identify the work in question:

Lincoln, F. C. 1930. Calculating waterfowl abundance on the basis of banding returns. United States Department of Agriculture Circular 118:1–4.

The author was Frederick C. Lincoln, who was bird-bander-in-chief in the U.S. for some 25 years. The agency he founded has since migrated from the Department of Agriculture to the U.S. Geological Survey and become the Bird Banding Laboratory.

Google returns hundreds of works that cite Lincoln’s paper (including some quite far afield from population biology). But tracking down the USDA document itself was not so easy. If the USDA has it online, I wasn’t able to locate it. But a search of WorldCat eventually turned up an archive in the Hathi Trust Digital Library where you can page through Lincoln’s pamphlet in a copy scanned by Google at the University of Minnesota library.

Lincoln gives only a brief and informal account of the recapture idea, but the basic principle is stated clearly enough:

If in one season 5,000 ducks were banded and yielded 600 first-season returns, or 12 percent, and if during that same season the total number of ducks killed and reported by sportsmen was about 5,000,000, then this number would be equivalent to approximately 12 per cent of the waterfowl population for that year, which would be about 42,000,000.

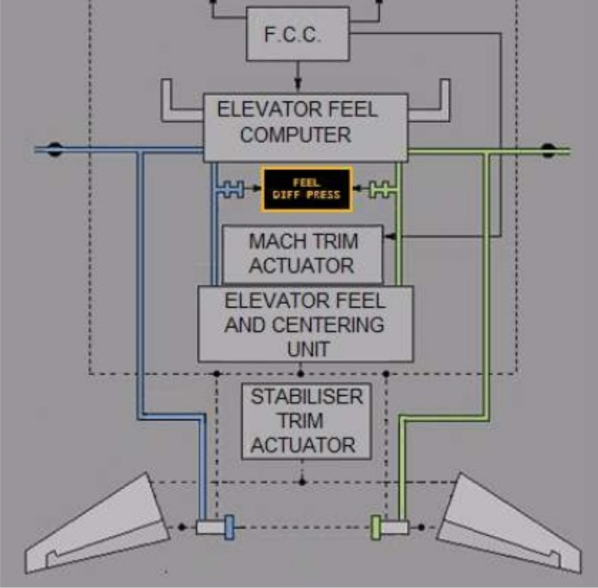

It’s not hard to translate this formula from the language of duck hunters into the language of proofreaders. The first reader finds 5,000 typos and the second spots 5 million; 600 of these errors are common to both lists, and so the total number of typos is:

$$\frac{E_1 E_2}{S} = \frac{5{,}000 \times 5{,}000{,}000}{600} \approx 42{,}000{,}000$$

So that’s my reward for a morning spent out hunting: 42 million typos.
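For anyone who wants to check the arithmetic, here is a minimal sketch of the E1E2/S calculation. The function name `lincoln_index` is an illustrative label, and the inputs are simply the figures from Lincoln's duck example.

```python
def lincoln_index(first_count, second_count, overlap):
    """Estimate the total population as E1 * E2 / S."""
    if overlap <= 0:
        # With no overlap the ratio is undefined (division by zero).
        raise ValueError("the estimate needs at least one item in common")
    return first_count * second_count / overlap


# Lincoln's example: 5,000 ducks banded, 5,000,000 ducks killed,
# 600 bands returned in the first season.
ducks = lincoln_index(5_000, 5_000_000, 600)
print(f"{ducks:,.0f}")
```

The exact quotient is a little under 41.7 million, which Lincoln rounds to "about 42,000,000."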

Does Frederick Lincoln deserve credit for the Lincoln Index? I’d say he has a good claim, except that Pierre Simon de Laplace had the same idea more than a century earlier. In 1802 Laplace applied his method to estimating the (human) population of France. But maybe that’s a story for another Sunday morning.

Epilogue. This is not really a story about typos, or about fish and ducks. It’s about finding things—about the phenomenal ease of chasing facts on the world wide web. Does a marked fish have any hope of escaping recapture there?

Responses from readers:

Please note: The bit-player website is no longer equipped to accept and publish comments from readers, but the author is still eager to hear from you. Send comments, criticism, compliments, or corrections to brian@bit-player.org.

Publication history

First publication: 11 July 2010

Converted to Eleventy framework: 22 April 2025

Unfortunately yes. I was trying to recover the lyrics of a song I learned about the U.S. Presidents as a kid, to the tune of “Yankee Doodle”. There are a few very different versions online, but the one that I recognize goes only up through Grover Cleveland, yet I remember it through Eisenhower. The rest is apparently lost, at least until someone bothers to put it online.

I don’t know about the population estimate, but the point you’re trying to make at the end about this being a simple calculation that obviously recovers the total number of errors is wrong. I think you must be thinking of the inclusion/exclusion principle. Assuming that the two copyreaders find all the errors between them, the total errors will be exactly E1+E2-S. There’s no reason for multiplication or division to come into this.

Ironically, there’s a typo in your article’s penultimate paragraph: the sentence “I’d say he has a good claim, except that Pierre Simon de Laplace more than a century earlier.” is incomplete.

Thanks for another entertaining post!

Interesting article. I wonder how well this kind of thing could apply to software quality results (and in particular for my interests, security vulnerabilities). I.e. if Lincoln finds E1 defects and Petersen finds E2 defects, with S in common, can we safely assume that there are E1E2/S defects?

I don’t think so, because testing for vulnerabilities is generally not a simple random sampling mechanism. Much depends on the method used for testing - static analysis versus fuzzing versus manual inspection or grey-box testing for example.

Certain testing methods will typically only find certain classes of vulnerabilities for example, and with varying degrees of effectiveness. Fuzzing (pseudo-random input with varying degrees of structure) might be very good at uncovering buffer overflows and other parsing or data-plane/semantic-boundary transition problems, but it typically doesn’t do as well at identifying privilege or permission bypass issues.

On the other hand, if you had two different teams fuzzing your firewall for example, and they came up with different results with some subset of identical results, it might make sense to apply the Lincoln index and estimate the total number of defects that could eventually be discovered by fuzzing, since fuzzing is essentially a random selection process.

The other interesting thing about fuzzing is that it tends to be subject to diminishing returns, so you might also be able to estimate the total “findable” number from the decline in the rate of identification. So perhaps the most useful upper bound would be to take E1 and E2 to be the rate-adjusted total-findable estimates (instead of the currently identified count) for the two different teams.

Another case where the selection process might not have been random is Petersen’s fish experiment. If 1/7th of a fish population had holes punched in their dorsal fins, and 1/5th of later catches were found with punched dorsal fins, it kind of suggests that punching holes in dorsal fins might make fish easier to catch. Either that, or adolescent European Plaice are susceptible to conformal peer pressure…

@Greg Wilson: Thanks for catching that. It was not a planted test for proofreaders.

@Greg: If both readers find all the errors, then E1=E2=S, and also E1+E2-S = E1E2/S. But the situation we’re interested in is one where we don’t know how many errors really exist; all we know is how many errors the two readers found, and how many of those were found by both. Your formula assumes that there are no other errors. The Lincoln/Petersen/Laplace formula assumes that both readers have gathered a random sample of errors from the unknown population. By the way, a corner case is when the two error sets have no overlap at all — i.e., S = 0. One response to this situation is to say that with no overlap we have no upper bound on the total population. But it’s also possible to take a less dire view, and use a formula along the lines of:

(E1+1)(E2+1)/(S+1) - 1.
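The three formulas in this exchange can be compared side by side. The sketch below is illustrative only: the function names and the sample counts are made up for the comparison, and returning `None` for the zero-overlap case is just one way to represent "no estimate."

```python
def found_so_far(e1, e2, s):
    """Distinct errors actually found (inclusion-exclusion): E1 + E2 - S."""
    return e1 + e2 - s


def lincoln(e1, e2, s):
    """E1 * E2 / S, or None when S == 0 and the ratio is undefined."""
    return e1 * e2 / s if s else None


def adjusted(e1, e2, s):
    """(E1 + 1)(E2 + 1) / (S + 1) - 1, which stays finite when S == 0."""
    return (e1 + 1) * (e2 + 1) / (s + 1) - 1


# Hypothetical counts: full agreement, partial overlap, no overlap.
for e1, e2, s in [(20, 20, 20), (20, 15, 10), (20, 15, 0)]:
    print(e1, e2, s,
          found_so_far(e1, e2, s), lincoln(e1, e2, s), adjusted(e1, e2, s))
```

When both readers find everything (20, 20, 20), all three formulas return 20. With partial overlap the Lincoln estimate (30) exceeds the 25 errors actually found, and with no overlap only the adjusted formula gives a number at all.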

@John Cowan: The whole world is counting on you to fill in that last missing piece of the community memory bank!

Phil Brass wrote:

That’s the question raised in the blog posting by Mat Roberts that launched all this.

Of course! Piercings. How could I have missed the obvious?

Okay, but what about three or more samples? I suppose the limit is catch-and-release fishing, when you could unluckily catch the same fish over and over again.

(But of course you should add a new mark to the fish every time you release it.)

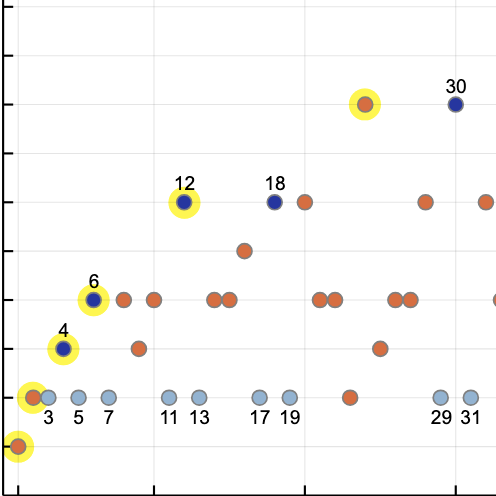

When John D. Barrow looks at the number of *unfound* errors, he uses the formula E1E2/S for the total number of errors. Here’s a quotation from p. 83 of his book “Impossibility” (Oxford: Oxford University Press, 1998):

- - - - -

Suppose that two editors, Jack and Jill, independently read a long newspaper article supplied by one of their journalists. Jack finds A typing errors, whilst Jill finds B typing errors. They compare copies and discover that they both found the same error on C occasions. How many errors do you expect to remain, unfound, in the article?

Let us suppose that the total number of errors in the article is E. This means that the number that have yet to be found equals E-A-B+C. The last factor of +C is so that we don’t double count the errors that Jack and Jill both found. Now, if the probability that Jack spots an error is p, and the probability that Jill spots an error is q, then we expect that A=pE, B=qE, and C=pqE, because they search independently. So, AB=pqE x E; hence AB=CE. Now we have the answer: the number of unfound errors equals E-A-B+C=AB/C - A - B + C, where we have replaced the unknown quantity, E, by AB/C. Rearranging our formula, we have shown that the number of unfound errors is equal to (A-C)(B-C)/C; that is,

Number of unfound errors=(Number found only by Jack) x (Number found only by Jill)/(Number found by both Jack and Jill)

- - - - -
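The rearrangement in the quoted passage skips a step. Written out, with E replaced by AB/C and everything put over the common denominator C, it goes roughly like this:

```latex
\begin{aligned}
E - A - B + C &= \frac{AB}{C} - A - B + C \\
              &= \frac{AB - AC - BC + C^{2}}{C} \\
              &= \frac{(A - C)(B - C)}{C}
\end{aligned}
```

As an illustrative check with made-up numbers, A = 20, B = 15 and C = 10 give (10)(5)/10 = 5 unfound errors, which agrees with an estimated total of AB/C = 30 minus the 25 distinct errors already found.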

I was first struck by the ease of finding things out on the web a few years ago, while working on a physics assignment in college with my apartment-mate. We were sitting in my room, me at my computer, when he asked what the half life of a particular isotope of molybdenum was, and I entered it directly into a url prefixed with “en.wikipedia.org/wiki/”.

Our conversation then turned to how I couldn’t have found the answer faster had he handed me a book and told me what page to look on, let alone if we had needed to go to a library or arrange for a reference to be sent from another library!

Hi,

How can we find the expected number of defects when there are more than two testers?

Regards,

Sachin.

I’ve always known this as the “Petersen Method” and used it (or derivatives) for epidemiological problems such as assessing the completeness of notifiable disease reporting systems, the exhaustivity of case-finding methods, and estimating the numbers of commercial sex workers in towns and cities. It has a known bias towards overestimation, and the Seber Estimator:

[(E1 + 1)(E2 + 1) / (S + 1)] - 1

is preferred.
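The bias can be illustrated exactly in an idealized model. The sketch below assumes the first reader has found E1 of the errors and the second reader's E2 finds are a uniformly random subset of all the errors, so the overlap S follows a hypergeometric distribution. The function name and the example numbers are hypothetical, and the code only handles the case E1 + E2 > total, where S can never be zero.

```python
from fractions import Fraction
from math import comb


def expected_estimates(total, e1, e2):
    """Exact expected values of E1*E2/S and (E1+1)(E2+1)/(S+1) - 1
    when the overlap S is hypergeometric (idealized random sampling)."""
    if e1 + e2 <= total:
        raise ValueError("need e1 + e2 > total so the overlap is never zero")
    ways = comb(total, e2)          # all possible second lists
    plain = Fraction(0)
    adjusted = Fraction(0)
    # S ranges from its forced minimum up to the smaller list size.
    for s in range(e1 + e2 - total, min(e1, e2) + 1):
        prob = Fraction(comb(e1, s) * comb(total - e1, e2 - s), ways)
        plain += prob * Fraction(e1 * e2, s)
        adjusted += prob * (Fraction((e1 + 1) * (e2 + 1), s + 1) - 1)
    return plain, adjusted


plain, adjusted = expected_estimates(100, 60, 60)
print(float(plain), float(adjusted))
```

In this model the plain E1E2/S estimate averages above the true total of 100, while the adjusted formula averages exactly 100. Real proofreading or case-finding data will not satisfy the sampling assumption so neatly.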

The simplicity of the arithmetic hides the complications of doing data collection well. The Petersen and Seber estimators assume (1) that the population is closed (e.g. the number of errors is constant) - this can also mean that the population is both closed and “well defined” (e.g. the two proof-readers have the same version of the text), (2) all errors are equally catchable by both proof-readers, (3) detected errors can be matched, and (4) detection of an error by one proof-reader is not influenced by the detection of the same error by the other proof-reader. This is, I think, more stringent than the two simple random samples mentioned above (that would cover (2) and (4) only). It may be easy (I have my doubts) to meet these assumptions in the context of proof-reading but is very difficult in epidemiological applications. In my experience, capture-recapture studies are often flawed (all of mine have been!). It is then a matter of identifying violations of assumptions and their likely effect in terms of the magnitude and direction on the final estimate.

There are several methods that use multiple testers / multiple lists. John might be interested in:

Schnabel, Z. E. (1938), “The Estimation of the Total Fish Population of a Lake”, American Mathematical Monthly, 45, 348–352.

which proposes an extension to the Petersen method based on cumulative marking with a single mark (i.e. no need for multiple marking or different marks for different testers).
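For the multiple-tester question, here is a rough sketch of a Schnabel-style calculation in the form it is commonly given in textbooks: the sum of C_t × M_t divided by the sum of R_t, where C_t is the size of tester t's list, M_t is the number of distinct defects already found by earlier testers, and R_t is how many of tester t's defects were already known. The function name, the representation of each list as a set of defect identifiers, and the sample data are all assumptions made for illustration.

```python
def schnabel_estimate(lists):
    """Estimate the total from several testers' defect lists, taken in order.

    For tester t: C_t = len(list), M_t = distinct defects found earlier,
    R_t = defects on this list that were already found.
    Estimate = sum(C_t * M_t) / sum(R_t).
    """
    seen = set()        # every defect found by earlier testers
    weighted = 0        # running sum of C_t * M_t
    recaptures = 0      # running sum of R_t
    for found in lists:
        found = set(found)
        weighted += len(found) * len(seen)
        recaptures += len(found & seen)
        seen |= found   # "mark" everything this tester found
    if recaptures == 0:
        raise ValueError("no overlap between lists; the estimate is undefined")
    return weighted / recaptures


# Hypothetical defect IDs for three testers.
tester_a = set(range(0, 20))
tester_b = set(range(15, 45))
tester_c = set(range(35, 60))

print(schnabel_estimate([tester_a, tester_b]))
print(schnabel_estimate([tester_a, tester_b, tester_c]))
```

With only two lists the calculation reduces to E1E2/S, and each further list adds its own term to the numerator and denominator. The same assumptions about a closed population and equal catchability apply.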

Good summaries of abundance estimators for capture-recapture data can be found in many textbooks. Krebs’ “Ecological Methodology” is well regarded.